Canonical, the company behind Ubuntu, has announced official support for the new NVIDIA Jetson Thor family, strengthening its strategic collaboration with NVIDIA to accelerate innovation in generative AI, humanoid robotics, and edge computing. With this integration, developers and enterprises will gain access to optimized Ubuntu images for Jetson Thor modules, with the reliability, security, and enterprise-grade support that Canonical is known for.

The announcement comes at a pivotal moment: as AI expands from the cloud toward smaller, closer-to-the-user devices, the challenge is to deliver increasingly powerful models in environments with limited energy resources, low-latency needs, and strict regulatory requirements.

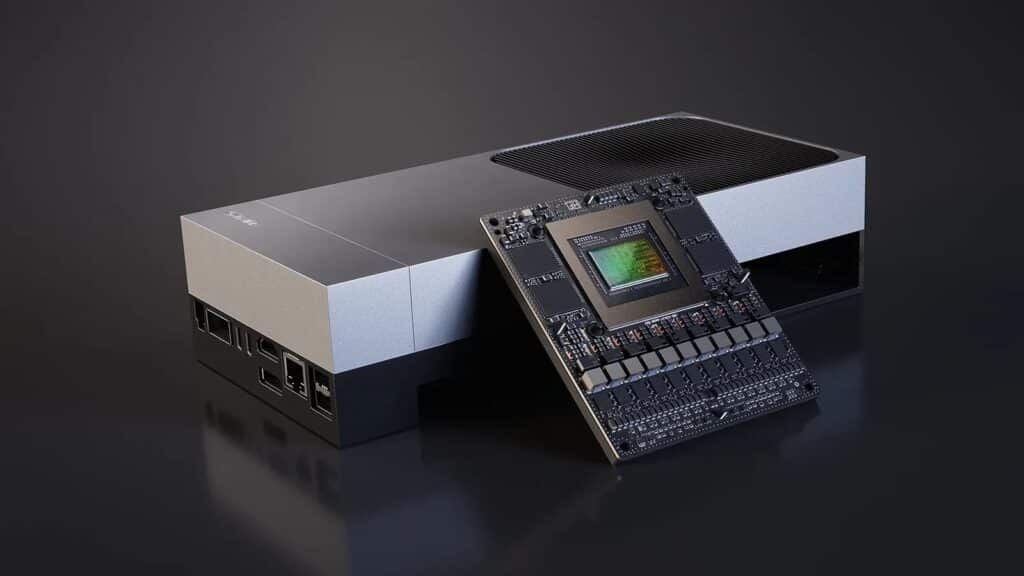

Jetson Thor: A giant leap beyond Orin

The new Jetson Thor generation redefines the standard for edge computing. Based on the NVIDIA Blackwell architecture, it integrates up to 128 GB of memory and delivers up to 2,070 FP4 TFLOPS of AI compute, enough to run the latest generative AI models in a compact and power-efficient form factor.

Key performance highlights compared with Jetson Orin:

- 7.5x higher AI compute performance

- 3.5x greater energy efficiency

- Compact design able to power humanoid robots, medical devices, autonomous vehicles, or industrial systems.
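The two multipliers above can be turned into rough implied figures. The sketch below is a back-of-the-envelope calculation, not NVIDIA data: it assumes both ratios describe the same peak workload and that "energy efficiency" means compute per watt. The function and constant names are illustrative.

```python
# Figures quoted in the article for Jetson Thor relative to Jetson Orin.
THOR_PEAK_FP4_TFLOPS = 2070.0
COMPUTE_RATIO = 7.5      # "7.5x higher AI compute performance"
EFFICIENCY_RATIO = 3.5   # "3.5x greater energy efficiency"


def implied_orin_baseline(thor_peak: float, compute_ratio: float) -> float:
    """Baseline compute figure implied by the quoted multiplier."""
    return thor_peak / compute_ratio


def implied_power_ratio(compute_ratio: float, efficiency_ratio: float) -> float:
    """Relative power draw at peak, assuming efficiency = compute / power."""
    return compute_ratio / efficiency_ratio


if __name__ == "__main__":
    baseline = implied_orin_baseline(THOR_PEAK_FP4_TFLOPS, COMPUTE_RATIO)
    power = implied_power_ratio(COMPUTE_RATIO, EFFICIENCY_RATIO)
    print(f"Implied Orin baseline: {baseline:.0f}")
    print(f"Implied peak power ratio: {power:.2f}x")
```

Under these assumptions the baseline works out to 276, in whatever unit and precision NVIDIA used for the Orin comparison (the article does not say), and peak power to roughly 2.1 times that of Orin.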

The Jetson AGX Thor Developer Kit offers a full prototyping environment, while the Jetson Thor modules are designed for production hardware integration.

Ubuntu as a trusted foundation for the edge

Canonical confirmed that Jetson Thor will be supported with official Ubuntu 24.04 LTS images (and future releases), including long-term security patches and updates.

Key benefits of Ubuntu support include:

- Enterprise-grade security and regulatory compliance: Continuous updates aligned with frameworks such as the EU Cyber Resilience Act, critical for sectors like healthcare, automotive, and defense.

- Certified stability: Each Ubuntu image undergoes over 500 hardware compatibility tests, ensuring reliability in production environments.

- Seamless development-to-deployment path: Developers can use the same Ubuntu stack on workstations, public clouds, and edge devices, enabling consistent large-scale deployments.

Canonical emphasized that this combination allows enterprises and startups to innovate without compromising security, resolving the classic dilemma: move fast or stay compliant. Now they can do both.

Unique capabilities for generative AI and autonomous robotics

Jetson Thor offers not only performance but also dedicated features for mission-critical applications:

- Real-time kernel: Guarantees deterministic latencies, essential in humanoid robotics and medical systems.

- Multi-Instance GPU (MIG): Splits a single GPU into secure, isolated instances to run multiple models simultaneously with guaranteed performance.

- Support for any GenAI model: JetPack 7 provides a powerful compute stack to run large language models (LLMs), vision-language models (VLMs), and action-based models like NVIDIA GR00T N.

- NVIDIA Holoscan: Enables ultra-low latency streaming of sensor data directly into the GPU, eliminating CPU bottlenecks for robotics, perception, or autonomous driving.

This makes Jetson Thor a true nerve center for physical AI, where the boundary between algorithms and the real world blurs.

Implications for developers

With official Ubuntu support, developers can now run state-of-the-art GenAI models locally, reducing dependence on the cloud. This brings three direct advantages:

- Lower inference costs.

- Minimal latency (critical in robotics and automotive).

- Stronger privacy, since data can remain on-device.

The platform also works with popular inference engines such as Ollama and vLLM, and with models and libraries from Hugging Face, making adoption straightforward for developer teams.
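As an illustration of what local inference looks like in practice, here is a minimal sketch that sends a prompt to an Ollama server running on the device itself. It assumes Ollama is installed and listening on its default local endpoint, and that a model tagged `llama3.1` has already been pulled; nothing here is specific to Jetson Thor, and the helper names are illustrative.

```python
import json
import urllib.request

# Assumption: Ollama's default local HTTP endpoint.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    # Requires a running Ollama server with the model available locally.
    print(generate("llama3.1", "Summarize what edge AI means in one sentence."))
```

Because the request never leaves `localhost`, the prompt and the response stay on the device, which is the privacy and latency benefit described above.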

A long-term strategy: democratizing edge AI

This collaboration is part of Canonical’s Silicon Partner Program, which certifies Ubuntu images for leading global hardware vendors. NVIDIA has been one of its key partners, with Jetson Thor being the latest milestone after Orin.

Both companies share the vision of a hybrid AI future: while the cloud remains critical, an increasing number of workloads will run at the edge, near the user and physical devices.

With Ubuntu and Jetson Thor, Canonical and NVIDIA aim to democratize AI across industries that until now lacked secure, efficient, and standardized platforms.

What’s next: availability and deployment

Canonical confirmed that official Ubuntu images for Jetson Thor will be available on its download portal in the coming weeks.

Options will include:

- Images for the Developer Kit (prototyping and exploration).

- Images for production modules, ready for enterprise use.

- Ubuntu Core versions, designed for IoT and secure edge deployments.

The process will be simple: download the matching image, flash it to a USB drive or NVMe storage, and deploy it on the board.
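A sensible extra step between downloading and flashing is to verify the image. The sketch below assumes a SHA-256 checksum is published alongside the Jetson Thor image, as Canonical does for other Ubuntu downloads; the article does not confirm this, and the file name and checksum in the usage comment are placeholders.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so large images do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as image:
        for chunk in iter(lambda: image.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path: str, expected_hex: str) -> bool:
    """Compare the local file's hash with the published value."""
    return sha256_of_file(path) == expected_hex.strip().lower()


# Usage (placeholder values, not real file names or checksums):
# if not matches_published_checksum("ubuntu-image.img", "<published sha256>"):
#     raise SystemExit("Checksum mismatch: do not flash this image.")
```

Only flash the image if the comparison succeeds; a mismatch usually means a corrupted or incomplete download.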

Frequently Asked Questions (FAQ)

What is NVIDIA Jetson Thor?

It’s NVIDIA’s new edge AI and robotics platform. Built on the Blackwell architecture, it delivers up to 2,070 FP4 TFLOPS, up to 128 GB of memory, and 3.5x greater energy efficiency than Orin.

What does official Ubuntu support bring?

It ensures long-term stability, enterprise-grade security patches, hardware certification, and compliance with regulations, reducing risk for industries like healthcare, automotive, and manufacturing.

Which applications will benefit the most?

Humanoid robotics, autonomous vehicles, medical devices, smart factories, and computer vision systems. Edge GenAI applications also benefit, through lower cloud costs and reduced latency.

When will Ubuntu images for Jetson Thor be available?

Canonical confirmed they will be released soon, covering both the developer kit and production environments.

Is it compatible with today’s AI models?

Yes. Jetson Thor works with tools such as Hugging Face libraries, vLLM, and Ollama, and can run models such as Llama 3.1, Qwen3, Gemma 3, and DeepSeek R1, as well as multimodal and vision-action models.
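Whether a particular model fits on the device is largely a memory question. The following sketch estimates the weight footprint from parameter count and precision and compares it with the 128 GB quoted above. It is a rough lower bound: it ignores the KV cache, activations, and runtime overhead, uses decimal gigabytes, and the `usable_fraction` headroom of 0.8 is an arbitrary assumption rather than a published figure.

```python
def weight_footprint_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed for model weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


def fits_in_memory(
    num_params: float,
    bits_per_param: int,
    memory_gb: float = 128.0,       # top memory configuration quoted in the article
    usable_fraction: float = 0.8,   # assumption: headroom left for OS and runtime
) -> bool:
    """True if the weights fit within the usable share of device memory."""
    return weight_footprint_gb(num_params, bits_per_param) <= memory_gb * usable_fraction


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_footprint_gb(70e9, bits)
        print(f"70B parameters at {bits}-bit: {size:.0f} GB, fits: {fits_in_memory(70e9, bits)}")
```

For example, a 70-billion-parameter model needs about 35 GB for weights at 4 bits per parameter but about 140 GB at 16 bits, which already exceeds 128 GB before any runtime overhead.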