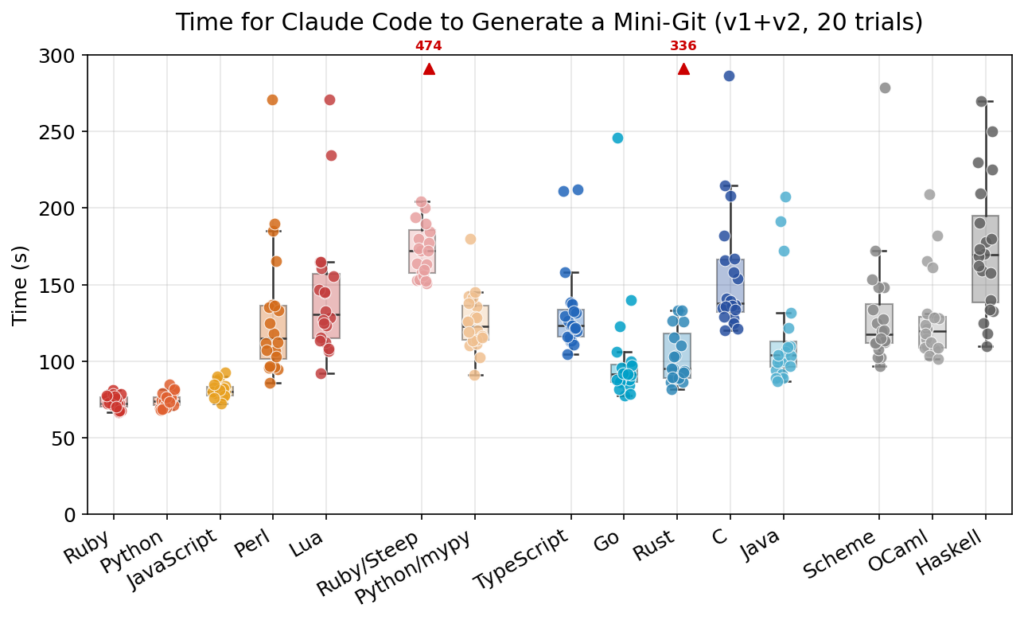

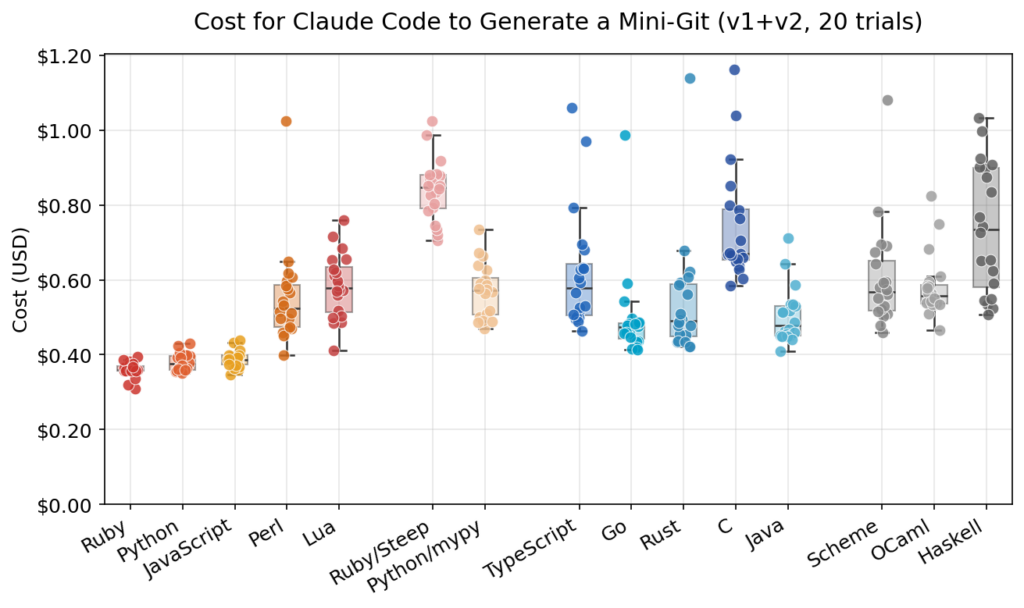

Claude Code has raised a very practical question for developers and engineering teams: if the agent can write software in almost any language, which one should you choose to move faster, spend fewer tokens, and keep reliability high? A benchmark published this month by Yusuke Endoh, the author of mame/ai-coding-lang-bench, tried to answer that with hard data. The experiment asked Claude Code to build a simplified version of Git in 13 languages, for 15 configurations in total once Python with mypy and Ruby with Steep were added, and repeated each configuration 20 times using Claude Opus 4.6. Each trial had two phases, so that meant 600 phase-level runs in total.

The broad result is striking, even if it is far from the last word. Ruby, Python, and JavaScript came out as the fastest, cheapest, and most stable choices in this prototyping-style task. Go landed in a strong upper-middle position, clearly ahead of C, Haskell, TypeScript, and Lua, but still behind the top three. For Go engineers, the takeaway is not that the language is a bad fit for Claude Code. It is that, at least today, the agent appears to work more efficiently when the task is expressed in dynamic languages with less initial friction.

What this benchmark actually measures

This benchmark was not trying to decide which programming language is “best” in general. It was trying to measure which language works best with Claude Code in a specific development pattern. The task was a “mini-git” built in two phases: first init, add, commit, and log, then status, diff, checkout, reset, rm, and show. The prompt was intentionally simple, and the benchmark avoided external libraries by even using a custom hash instead of SHA-256, so language-level differences would stand out more clearly.

In that comparison, Ruby finished first at 73.1 seconds and an average cost of $0.36. Python came second at 74.6 seconds and $0.38. JavaScript followed at 81.1 seconds and $0.39. Go placed fourth with 101.6 seconds, an average cost of $0.50, 324 lines of code after the second phase, and a perfect 40/40 test pass rate. That is a respectable result, especially because Go did not fail a single run, something that did happen in Rust and Haskell. But it also makes one thing clear: in this experiment, Claude Code needed noticeably more time to reach the finish line in Go than it did in Ruby or Python.
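To put those averages on a common scale, the short Python sketch below normalizes each language against Ruby. The figures are the ones quoted above; the dictionary layout and the `relative_to` helper are illustrative names, not part of the benchmark's own tooling.

```python
# Average total time (seconds) and cost (USD) per run, as quoted in this article.
RESULTS = {
    "Ruby": (73.1, 0.36),
    "Python": (74.6, 0.38),
    "JavaScript": (81.1, 0.39),
    "Go": (101.6, 0.50),
}


def relative_to(baseline: str, results: dict) -> dict:
    """Return (time ratio, cost ratio) for each language versus the baseline."""
    base_time, base_cost = results[baseline]
    return {
        lang: (time / base_time, cost / base_cost)
        for lang, (time, cost) in results.items()
    }


ratios = relative_to("Ruby", RESULTS)
for lang, (time_ratio, cost_ratio) in ratios.items():
    print(f"{lang:<10} time x{time_ratio:.2f}  cost x{cost_ratio:.2f}")
```

By this measure Go took roughly 39% longer than Ruby and cost roughly 39% more per run, while Python and JavaScript stayed within about 11% of Ruby on both axes.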

There is another important caveat. The author explicitly says these conclusions are most meaningful for prototype-scale tasks. He also notes his own bias as a Ruby committer and points out that static typing may well show stronger advantages in larger projects, even though building a fair large-scale benchmark across so many languages is difficult. Runtime performance, frameworks, tooling, and ecosystem maturity also remain critical in any real engineering decision.

What the results say about Go

The most interesting point for Go teams is not simply that Ruby and Python won. It is how Go lost. Go does not show up as problematic or unreliable. It shows up as dependable, but less fluid for the coding agent in this kind of loop. It passed every test, tied Java on average cost, and clearly beat languages like TypeScript, C, and Haskell in total time. The weakness was speed and variance. Go averaged 101.6 seconds with a standard deviation of 37.0 seconds, a much wider spread than Ruby, Python, or JavaScript showed.

That suggests Claude Code can produce solid Go, but still needs more steps, more context, or more iteration to get there. In phase one, creating a fresh project in Go took 47.5 seconds on average, versus 33.2 seconds for Ruby and 32.9 seconds for Python. In phase two, extending the project took 54.1 seconds in Go, compared with 40.0 seconds in Ruby and 41.8 seconds in Python. That difference matters in the kind of short prompt-wait-revise-prompt loop that Claude Code encourages.
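The per-phase figures can be lined up to see where the gap opens. This is a small sketch that uses only the averages quoted above; the names `PHASES` and `gap` are made up for the illustration.

```python
# Average seconds per phase as quoted above: (phase 1: new project, phase 2: extension).
PHASES = {
    "Go": (47.5, 54.1),
    "Ruby": (33.2, 40.0),
    "Python": (32.9, 41.8),
}


def gap(lang: str, other: str) -> tuple:
    """Extra seconds `lang` needs over `other` in each phase."""
    return tuple(round(a - b, 1) for a, b in zip(PHASES[lang], PHASES[other]))


go_total = round(sum(PHASES["Go"]), 1)  # matches the 101.6 s overall average
print("Go total:", go_total)
print("Go vs Ruby, per phase:", gap("Go", "Ruby"))
print("Go vs Python, per phase:", gap("Go", "Python"))
```

Go's deficit comes to roughly 12 to 15 seconds in each phase, so the slowdown is spread across both creating and extending the project rather than concentrated in one of them.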

Still, it would be the wrong conclusion to say Claude Code is a poor match for Go. Go retains real advantages for production systems: static typing, simplicity, excellent tooling, built-in support for concurrency, and a proven standard library. Many teams will gladly accept slightly slower code generation if the result fits their backend stack, deployment model, and operational preferences. A language choice is not only about what the agent can write fastest. It is also about what humans can maintain and what systems can run efficiently in production.

Types, tokens, and friction

One of the most revealing parts of the benchmark is what happened when strict typing was layered onto already familiar languages. Python with mypy --strict jumped from 74.6 to 125.3 seconds and from $0.38 to $0.57. Ruby with Steep rose even more dramatically, from 73.1 to 186.6 seconds and from $0.36 to $0.84. The author interprets this as a combination of additional syntactic overhead, more work for the agent, and possibly less model familiarity with certain type ecosystems.
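The size of that overhead is easier to judge as a multiplier. The sketch below computes it from the numbers in this section; `overhead` is a hypothetical helper, and the per-run dollar deltas are simple subtraction, not figures reported by the benchmark.

```python
# (seconds, USD) per run without and with the type checker, as quoted above.
UNTYPED = {"Python": (74.6, 0.38), "Ruby": (73.1, 0.36)}
TYPED = {"Python": (125.3, 0.57), "Ruby": (186.6, 0.84)}  # mypy --strict, Steep


def overhead(lang: str) -> dict:
    """Time and cost multipliers plus the extra dollars per run from adding types."""
    (t0, c0), (t1, c1) = UNTYPED[lang], TYPED[lang]
    return {
        "time_x": round(t1 / t0, 2),
        "cost_x": round(c1 / c0, 2),
        "extra_usd": round(c1 - c0, 2),
    }


for lang in UNTYPED:
    print(lang, overhead(lang))
```

Under these figures, mypy --strict made the Python runs about 1.7 times slower and 1.5 times more expensive, while Steep made the Ruby runs about 2.6 times slower and 2.3 times more expensive.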

That does not mean types are a bad idea. It means that, in March 2026 and for this benchmark, the agent appears to move more comfortably when the language lets it progress with less mandatory structure from the start. In fact, the only three failed runs across all 600 executions came from Rust and Haskell, two statically typed languages with concepts that may add cognitive load for the model. The benchmark does not prove that static typing reduces quality, but it does weaken the simplistic claim that types alone solve agentic coding mistakes.

For Go teams, this opens up a practical question. If your immediate goal is rapid iteration with Claude Code, quick validation of an idea, or low-friction prototyping, the benchmark suggests Ruby, Python, or JavaScript may currently be better companions. If your goal is to ship a concurrent, maintainable backend system aligned with modern infrastructure practices, Go still looks like a sensible choice, even if the agent takes longer to get there. The repository itself raises the possibility that the classic workflow still makes sense: prototype in a dynamic language, then migrate to a static one later. Whether agent-driven migration is mature enough to make that strategy routine is still an open question.

At a broader level, the benchmark shows that Claude Code does not erase language differences. It reshuffles them. Generation speed, token cost, flow stability, and model familiarity with a language’s syntax and ecosystem are becoming real productivity variables. For Go, that is not a defeat. But it is a warning that in the age of coding agents, language choice affects not only runtime performance and maintainability, but also how quickly and smoothly AI can collaborate with you.

FAQ

Which language performed best for Claude Code in this benchmark?

Ruby finished first, followed very closely by Python and then JavaScript. Those three were the fastest, cheapest, and most stable in the mini-git benchmark.

How did Go perform compared with Ruby and Python?

Go placed fourth, passed all tests, and delivered solid reliability, but it was slower and somewhat more expensive than Ruby and Python in this particular workflow.

Does this mean Go is a bad language for Claude Code?

No. It means Claude Code currently appears to prototype faster in dynamic languages. Go still delivered perfect test success in the benchmark and remains a strong choice for real production systems.

Can this benchmark decide a real-world stack on its own?

No. The experiment focuses on prototype-scale tasks. In larger systems, typing, runtime performance, ecosystem maturity, frameworks, and operational needs may change the picture significantly.