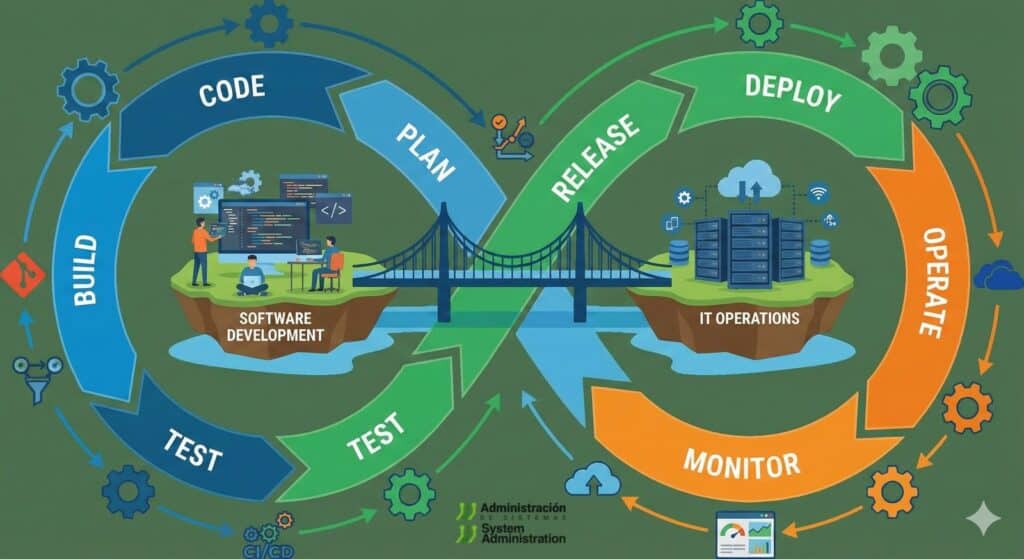

In today’s software world, writing good code is not enough. Teams need to ship fast, maintain quality and keep systems running smoothly. That’s where DevOps comes in: a way of working that breaks down the wall between development and operations, backed by a set of tools that automate almost the entire software lifecycle, from the first commit to production deployment, including testing, infrastructure and monitoring.

DevOps tools are no longer a “nice to have” for advanced teams. They are the foundation behind most modern digital products that depend on the cloud, frequent releases and continuous improvement.

What DevOps tools are and why they matter

When people talk about DevOps tools, they’re referring to a set of solutions that help to:

- Version code and collaborate without stepping on each other’s toes.

- Test and deploy changes continuously (CI/CD).

- Package applications into containers and run them at scale.

- Treat infrastructure as code, instead of configuring it manually.

- Monitor applications and infrastructure in real time.

- Coordinate team work with agile practices.

There’s no single “magic tool”. Instead, it’s a chain of tools that integrate with each other, forming what many call a “DevOps toolchain”.

Version control: the foundation of any DevOps team

Without version control, there is no DevOps. It’s the layer that allows multiple people to work on the same codebase without chaos.

Git and collaborative platforms

Git has become the de facto standard. It’s a distributed version control system, which means every developer has a complete copy of the repository and history on their own machine. This makes it easier to:

- Work offline.

- Create branches for new features without touching stable code.

- Review changes, roll back and compare versions.
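Git names every stored file version by a hash of its content. Because of this, two full copies of a repository on different machines agree on what each object is without needing a central server. Here is a minimal Python sketch of how Git derives a file's (blob) ID. It assumes a repository using Git's default SHA-1 object format; newer Git versions can also create SHA-256 repositories.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Return the ID Git gives a file's contents in a SHA-1 repository."""
    # Git hashes a header ("blob", a space, the size in bytes, a NUL byte)
    # followed by the raw file contents.
    header = f"blob {len(content)}\0".encode()
    return hashlib.sha1(header + content).hexdigest()


print(git_blob_id(b"hello world\n"))
```

The printed ID should match what `echo 'hello world' | git hash-object --stdin` reports. The ID depends only on the content, so any edit to a file produces a new ID. That is how Git can detect changes and compare versions cheaply.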

On top of Git, platforms like GitHub, GitLab and Bitbucket add:

- A web interface to review code and changes.

- Pull/merge requests with peer reviews.

- Issue and task tracking.

- Integration with CI/CD pipelines.

In practice, these platforms become the daily hub: everything starts from the repository and ends up there again.

Continuous integration and delivery (CI/CD): deploying without fear

Continuous Integration (CI) and Continuous Delivery/Deployment (CD) sit at the heart of DevOps. The idea is simple: whenever someone pushes changes to the repository, the system:

- Builds the code.

- Runs automated tests.

- Produces deployable artefacts.

- Optionally deploys to test or even production environments.
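At their core, the build, test and package steps above mean running commands in order and stopping at the first failure. The following is a minimal Python sketch of that fail-fast logic. The stage names and commands are hypothetical placeholders (each one just runs a tiny Python snippet); a real CI server would call your build tool and test runner instead.

```python
import subprocess
import sys

# Hypothetical stages: (name, command). Each command is only a stand-in.
PIPELINE = [
    ("build", [sys.executable, "-c", "print('compiling')"]),
    ("test", [sys.executable, "-c", "print('running tests')"]),
    ("package", [sys.executable, "-c", "print('writing artefact')"]),
]


def run_pipeline(stages):
    """Run stages in order and stop at the first failing one.

    Returns (succeeded, names_of_completed_stages).
    """
    completed = []
    for name, command in stages:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            # A non-zero exit code is the usual signal that a step failed.
            print(f"[{name}] FAILED\n{result.stderr}")
            return False, completed
        print(f"[{name}] ok: {result.stdout.strip()}")
        completed.append(name)
    return True, completed
```

Calling `run_pipeline(PIPELINE)` returns `(True, ['build', 'test', 'package'])`. If any command exits with a non-zero status, the later stages, including any deploy step, never run. This is what makes frequent pushes safe.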

Jenkins and GitLab CI/CD

In this area, two tools are especially common:

- Jenkins: a widely used, open-source automation server that lets teams build complex pipelines. It can compile code, run tests, generate container images or deploy to different environments, all orchestrated through jobs and declarative pipelines.

- GitLab CI/CD: built directly into GitLab. Pipelines are defined in a YAML file (.gitlab-ci.yml) stored in the same repository as the code. This keeps application logic and deployment logic together, versioned and reviewable.
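Because the pipeline definition lives in the repository, it is plain text that can be reviewed and validated like any other code. In GitLab CI/CD, jobs are grouped into stages. By default, stages run one after another, and jobs within the same stage can run in parallel. The Python sketch below reads a hypothetical JSON definition and works out that execution order, rejecting jobs that reference undeclared stages. The definition is deliberately much simpler than the real .gitlab-ci.yml syntax, which is YAML with many more options.

```python
import json

# Hypothetical definition, stored as text next to the application code.
# This is NOT GitLab's schema; it only mirrors the stage/job idea.
PIPELINE_DEFINITION = """
{
  "stages": ["build", "test", "deploy"],
  "jobs": {
    "compile":    {"stage": "build"},
    "unit-tests": {"stage": "test"},
    "lint":       {"stage": "test"},
    "to-staging": {"stage": "deploy"}
  }
}
"""


def execution_plan(definition: str) -> list[tuple[str, list[str]]]:
    """Group jobs by stage, keeping stages in their declared order."""
    config = json.loads(definition)
    groups = {stage: [] for stage in config["stages"]}
    for job, spec in config["jobs"].items():
        stage = spec["stage"]
        if stage not in groups:
            raise ValueError(f"job {job!r} uses undeclared stage {stage!r}")
        groups[stage].append(job)
    # Jobs inside one group could run in parallel; groups run in sequence.
    return [(stage, sorted(jobs)) for stage, jobs in groups.items()]
```

For the sample definition, `execution_plan` yields `build: compile`, then `test: lint, unit-tests`, then `deploy: to-staging`. A typo in a stage name fails at validation time rather than halfway through a deployment.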

By automating these steps, teams reduce human error, catch problems earlier and lose the fear of shipping changes frequently.

Containers and orchestration: running the same app in any environment

Containers have reshaped how applications are packaged and run. The idea is to encapsulate code and its dependencies into a standard, reproducible unit.

Docker: packaging the application

Docker has become a must-have tool. It allows teams to:

- Build application images with everything needed to run.

- Ensure behaviour is the same in dev, staging and production.

- Reduce “it works on my machine” issues.

Developers describe how the image is built in a text file called a Dockerfile, and from there the same image can run on any server or cloud that supports containers.
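Docker builds an image step by step from the Dockerfile's instructions. It reuses a cached result for a step only when that step and every step before it are unchanged. That is why Dockerfiles commonly copy the dependency manifest and install dependencies before copying the rest of the source code: editing application code then leaves the slow install step cached. The Python sketch below models only that chaining rule. The build steps are hypothetical strings, and the “v1”/“v2” suffixes stand in for the file checksums Docker actually takes into account for COPY steps.

```python
import hashlib


def layer_keys(steps: list[str]) -> list[str]:
    """Give each step a cache key that also depends on every earlier step."""
    keys, parent = [], ""
    for step in steps:
        parent = hashlib.sha256(f"{parent}\n{step}".encode()).hexdigest()
        keys.append(parent)
    return keys


def reusable_layers(previous: list[str], current: list[str]) -> int:
    """Count leading steps whose cache keys match the previous build."""
    reused = 0
    for old, new in zip(layer_keys(previous), layer_keys(current)):
        if old != new:
            break  # once one key differs, every later key differs too
        reused += 1
    return reused


previous_build = [
    "FROM python:3.12-slim",
    "COPY requirements.txt (v1)",
    "RUN pip install -r requirements.txt",
    "COPY src/ (v1)",
]
# Only the application code changed since the last build.
current_build = previous_build[:3] + ["COPY src/ (v2)"]
print(reusable_layers(previous_build, current_build))  # 3
```

If `requirements.txt` changed instead, only the base image step would be reused, and the dependency install would run again.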

Kubernetes: managing containers at scale

When you need to run many containers across multiple servers (nodes), Kubernetes, the most widely adopted container orchestrator, comes into play:

- It decides which node runs each container (in practice it schedules pods, small groups of containers that it manages as a unit).

- Scales instances up or down based on load (when autoscaling is configured).

- Replaces failed containers with new ones.

- Simplifies progressive rollouts and updates with minimal downtime.
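Kubernetes works as a set of control loops. It continually compares the desired state (for example, “three replicas of this app”) with what is actually running and acts on the difference. The Python sketch below is a toy version of one such loop, built on heavy assumptions. Nodes are plain lists of replica names, and “least-loaded” simply means fewest replicas; the real scheduler weighs resource requests, affinity rules and more. The scaling helper uses the ratio formula documented for the Horizontal Pod Autoscaler, without its tolerance band or min/max limits.

```python
import math


def desired_replicas(current: int, current_metric: float, target_metric: float) -> int:
    """HPA-style rule: ceil(current * current_metric / target_metric)."""
    return math.ceil(current * current_metric / target_metric)


def _free_name(nodes: dict[str, list[str]], app: str) -> str:
    """Pick the first unused name of the form <app>-<n> (hypothetical naming)."""
    taken = {r for replicas in nodes.values() for r in replicas}
    n = 1
    while f"{app}-{n}" in taken:
        n += 1
    return f"{app}-{n}"


def reconcile(nodes: dict[str, list[str]], app: str, desired: int) -> list[str]:
    """One pass of a toy control loop; mutates `nodes` and returns the actions taken."""
    actions = []
    running = [(node, r) for node, replicas in nodes.items()
               for r in replicas if r.startswith(f"{app}-")]
    # Too many replicas: remove the surplus.
    for node, replica in running[desired:]:
        nodes[node].remove(replica)
        actions.append(f"delete {replica} from {node}")
    # Too few (scale-up, or a replica crashed): start new ones
    # on the node currently running the fewest replicas.
    for _ in range(desired - len(running)):
        target = min(nodes, key=lambda name: len(nodes[name]))
        replica = _free_name(nodes, app)
        nodes[target].append(replica)
        actions.append(f"create {replica} on {target}")
    return actions


cluster = {"node-a": ["web-1", "web-2"], "node-b": []}  # web-3 on node-b just crashed
print(reconcile(cluster, "web", 3))  # ['create web-3 on node-b']
print(desired_replicas(3, current_metric=90, target_metric=60))  # 5
```

Running `reconcile` again with the same target does nothing. This “converge, then stay put” behaviour is what lets the real control loops run continuously.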

In a DevOps context, Kubernetes becomes the “operating system” for application infrastructure.

Portainer: a friendlier view

Tools like Portainer add a visual layer on top of Docker and Kubernetes. They’re especially useful for:

- Teams just getting started who want a graphical interface to see what’s running.

- Admins who need a quick overview of container status.

They help flatten the learning curve and make it easier to understand what’s happening under the hood.

Configuration management and Infrastructure as Code (IaC)

DevOps thinking doesn’t stop at application code. It also applies to infrastructure: servers, networks, load balancers, databases, and more.

Ansible: automating servers and configurations

Ansible lets teams describe, in human-readable text files (playbooks), how a system should be configured:

- Which packages to install.

- Which services to enable.

- Which configuration files to deploy.

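With a single command, Ansible can apply that configuration across many servers (typically over SSH, with no agent installed on the managed machines), keeping them consistently configured. Because its modules are designed to be idempotent, running the same playbook again only changes what has drifted. This reduces manual mistakes and makes it easy to reproduce environments (for example, spinning up a pre-production environment identical to production).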
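That idempotence is what makes repeated runs safe. Each task checks the current state first and only acts when it differs from what the playbook asks for, reporting whether it changed anything. The sketch below illustrates the idea in plain Python. It does not use Ansible's API, and the hosts and tasks are hypothetical dictionaries and functions.

```python
def ensure_package(host: dict, name: str) -> bool:
    """Install `name` only if it is missing. Returns True if anything changed."""
    if name in host["packages"]:
        return False
    host["packages"].add(name)  # stand-in for actually installing it
    return True


def ensure_service_enabled(host: dict, name: str) -> bool:
    """Enable `name` only if it is not enabled yet."""
    if name in host["enabled_services"]:
        return False
    host["enabled_services"].add(name)
    return True


TASKS = [
    lambda host: ensure_package(host, "nginx"),
    lambda host: ensure_service_enabled(host, "nginx"),
]


def apply_tasks(hosts: dict[str, dict], tasks) -> dict[str, int]:
    """Run every task on every host; return how many tasks changed each host."""
    return {name: sum(task(state) for task in tasks) for name, state in hosts.items()}


hosts = {
    "web1": {"packages": set(), "enabled_services": set()},
    "web2": {"packages": {"nginx"}, "enabled_services": set()},  # partially configured
}
print(apply_tasks(hosts, TASKS))  # {'web1': 2, 'web2': 1}
print(apply_tasks(hosts, TASKS))  # {'web1': 0, 'web2': 0}: nothing left to change
```

Both hosts end up in the same state even though they started differently, which is the property that makes environments reproducible.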

Terraform: defining infrastructure as code

Terraform goes a step further and lets teams define entire infrastructures as code:

- Virtual machines, networks and load balancers in the cloud.

- Managed databases.

- Storage, message queues and other services.

The configuration files describe the desired state. Terraform compares it with the current state it has recorded, shows a plan of the changes it intends to make, and then creates, modifies or destroys resources to match. Everything is versioned alongside the code, making audits, reviews and repeatable deployments much easier.
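The core of that workflow is a diff between the desired state and the current state. The following is a minimal Python sketch of how such a plan could be computed. It assumes resources are identified by hypothetical addresses and described by plain dictionaries. Real Terraform also refreshes state from providers, orders changes by dependencies, and sometimes has to replace a resource rather than update it in place.

```python
def plan(desired: dict[str, dict], current: dict[str, dict]) -> dict[str, list[str]]:
    """Work out which resources to create, update or destroy."""
    return {
        "create": sorted(desired.keys() - current.keys()),
        "update": sorted(a for a in desired.keys() & current.keys()
                         if desired[a] != current[a]),
        "destroy": sorted(current.keys() - desired.keys()),
    }


def apply(desired: dict[str, dict], current: dict[str, dict]) -> dict[str, list[str]]:
    """Make `current` match `desired` and return the plan that was executed."""
    changes = plan(desired, current)
    for address in changes["destroy"]:
        del current[address]
    for address in changes["create"] + changes["update"]:
        current[address] = dict(desired[address])
    return changes


desired = {"vm.web": {"size": "medium"}, "db.main": {"engine": "postgres"}}
current = {"vm.web": {"size": "small"}, "queue.jobs": {"type": "fifo"}}
print(plan(desired, current))
# {'create': ['db.main'], 'update': ['vm.web'], 'destroy': ['queue.jobs']}
```

After `apply(desired, current)`, a second `plan` returns three empty lists. This “no changes” result is what a repeatable deployment should produce.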

Monitoring and observability: seeing what’s happening in real time

Once applications are running in production, the job is far from done. DevOps also means measuring, watching and reacting quickly.

Prometheus: metrics and alerts

Prometheus is an open-source solution for collecting metrics, which it typically pulls (scrapes) over HTTP from the applications and exporters it monitors:

- CPU, memory and disk usage.

- Request latency.

- Errors per second.

- Custom application metrics.

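Metrics are stored in a time-series database, and alerting rules evaluated against them can fire when something goes off the rails: spikes in errors, crashing instances or slow response times. Prometheus hands those alerts to a companion component, Alertmanager, which groups them and sends the notifications.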
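Counters such as “total requests” only ever go up, so alerts are usually based on their rate of increase over a time window rather than on raw values. The Python sketch below computes a simplified per-second rate. Like Prometheus, it treats any drop as a counter reset, but it skips the extrapolation that PromQL's rate() applies at window edges. It then checks an error-ratio threshold. The metric names in the comment and the 5% threshold are assumptions for illustration.

```python
def per_second_rate(samples: list[tuple[float, float]]) -> float:
    """Average per-second increase of a counter from (timestamp, value) samples."""
    increase = 0.0
    for (_, previous), (_, value) in zip(samples, samples[1:]):
        # A drop means the process restarted and the counter began again at 0.
        increase += value - previous if value >= previous else value
    elapsed = samples[-1][0] - samples[0][0]
    return increase / elapsed


def error_ratio_alert(requests, errors, threshold: float = 0.05) -> bool:
    """Fire when errors make up more than `threshold` of requests.

    Roughly what a PromQL rule like this expresses (hypothetical metric names):
      rate(http_requests_errors_total[5m]) / rate(http_requests_total[5m]) > 0.05
    """
    return per_second_rate(errors) / per_second_rate(requests) > threshold


# Hypothetical samples taken every 60 seconds.
requests = [(0, 1000), (60, 1600), (120, 2200)]  # 10 requests/s
errors = [(0, 10), (60, 70), (120, 130)]         # 1 error/s
print(error_ratio_alert(requests, errors))  # True: 10% of requests are failing
```

Alerting on a ratio rather than a raw error count keeps the rule meaningful whether traffic is quiet or heavy.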

Grafana: dashboards and visualisation

Grafana has become the go-to tool for visualising metrics. It allows teams to:

- Build custom dashboards with charts, tables and alerts.

- Combine data from Prometheus with other sources (logs, databases, APM tools).

- Share dashboards with development, operations and even business stakeholders.

Together, Prometheus and Grafana form the backbone of observability in many DevOps setups.

Planning and collaboration: organising work around the code

DevOps is not just about technology; it’s also about how work is organised. Tools like Jira help teams:

- Manage tasks, user stories and incidents.

- Visualise work using Kanban or Scrum boards.

- Coordinate development, operations and business teams.

Integrated with repositories, CI/CD systems and support tools, Jira offers a unified view: what’s being developed, what’s been deployed, which bugs have been reported and where each item stands.
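Much of that linking rests on a simple convention: mentioning an issue key such as PAY-42 in a commit message, branch name or pull request title. The following is a minimal Python sketch of extracting those keys from commit messages. The regular expression approximates Jira's default key format (an uppercase project key, a hyphen and a number), and the commits and project keys are hypothetical.

```python
import re

# Approximates the default Jira key format: e.g. PAY-42, OPS-7.
# It can also match unrelated tokens that look similar (such as "UTF-8").
ISSUE_KEY = re.compile(r"\b[A-Z][A-Z0-9_]+-\d+\b")


def link_commits(commits: dict[str, str]) -> dict[str, list[str]]:
    """Map each issue key to the commit IDs whose messages mention it."""
    links: dict[str, list[str]] = {}
    for commit_id, message in commits.items():
        for key in sorted(set(ISSUE_KEY.findall(message))):
            links.setdefault(key, []).append(commit_id)
    return links


commits = {
    "a1b2c3d": "PAY-42 Fix rounding in invoice totals",
    "e4f5a6b": "Refactor logging (OPS-7, PAY-42)",
    "c7d8e9f": "Update README",
}
print(link_commits(commits))
# {'PAY-42': ['a1b2c3d', 'e4f5a6b'], 'OPS-7': ['e4f5a6b']}
```

Commits without a key, like the README update, simply stay unlinked. This is one reason teams often enforce the convention with a commit-message check.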

How to choose the right DevOps toolchain

Not every team needs every tool, or the same products. When building a DevOps toolchain, it’s worth considering:

- Team size: smaller teams may prefer integrated platforms (repo + CI/CD + project management).

- Project type: a microservices app on Kubernetes is not the same as a monolith on a single server.

- Experience level: some tools come with a steeper learning curve.

- Environment: whether you run on a public, private or hybrid cloud shapes IaC and orchestration choices.

- Culture: DevOps requires shared responsibility, automation and documentation; without that culture, tools alone won’t fix anything.

A common path is to start with a solid foundation (Git + a repository platform + simple CI/CD) and then add containers, IaC and monitoring as the project grows.

Frequently asked questions about DevOps tools

1. What basic DevOps tools does a team just starting out really need?

A sensible starting point usually includes: Git for version control, a platform such as GitHub, GitLab or Bitbucket to host the code, a CI/CD tool (for example Jenkins or the built-in GitLab CI) and a minimal monitoring setup to collect basic application and system metrics.

2. Do we have to use containers and Kubernetes to “do DevOps”?

No. DevOps is a way of working based on collaboration and automation. Containers and Kubernetes make it easier to standardise deployments and scale, but teams can apply DevOps principles on traditional virtual machines as long as they automate integration, testing, deployments and monitoring.

3. What’s the practical difference between Ansible and Terraform?

Both are used in DevOps contexts, but they focus on different layers. Ansible is mainly about configuring systems and automating tasks (installing packages, deploying files, configuring services). Terraform is about defining and provisioning infrastructure (servers, networks, managed services) as code. They are often used together: Terraform builds the infrastructure, and Ansible configures it.

4. How does the choice of DevOps tools affect security?

Well-configured DevOps tools can actually improve security. They enable systematic code reviews, integrate vulnerability scanners into pipelines, control who can deploy and what gets deployed, and monitor suspicious behaviour. However, if they’re configured carelessly, they can open new attack paths (for example, leaked credentials in pipelines). That’s why security should be part of the strategy from day one when designing a DevOps toolchain, an approach often called “DevSecOps”.
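A common way to tackle the leaked-credentials risk is a secret-scanning step inside the pipeline itself. The following is a minimal Python sketch of the idea. The three rules are illustrative assumptions; the AKIA prefix is the documented format of AWS access key IDs. Dedicated scanners use far larger, tuned rule sets plus extra checks such as entropy analysis.

```python
import re

# Illustrative rules only; dedicated scanners ship far larger, tuned rule sets.
RULES = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded password": re.compile(r"(?i)password\s*[:=]\s*['\"][^'\"]+['\"]"),
}


def scan(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for every line that looks like a secret."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append((line_number, rule))
    return findings


def exit_code(files: dict[str, str]) -> int:
    """Report findings per file; a non-zero result should fail the CI job."""
    found = False
    for path, text in files.items():
        for line_number, rule in scan(text):
            print(f"{path}:{line_number}: possible {rule}")
            found = True
    return 1 if found else 0
```

In a pipeline, a job could pass the contents of the changed files to `exit_code` and call `sys.exit` with the result, so a likely leak blocks the merge instead of reaching the repository history.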