AI security has moved well beyond theory. It is no longer enough for a model to produce good answers or for an agent to complete a task. Companies also need to know whether that system can leak data, be manipulated by malicious prompts, misuse tools, or drift outside the rules it is supposed to follow. That is the space where Promptfoo has gained serious traction: an open-source project built to evaluate, red-team, and scan AI applications for vulnerabilities before they reach production.

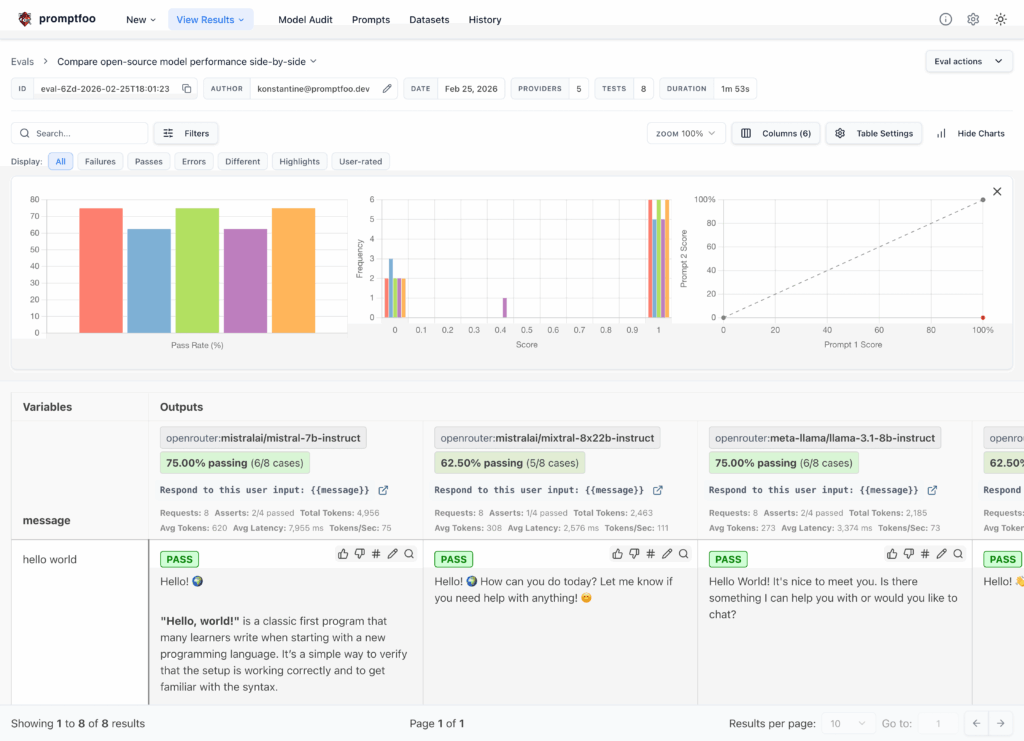

Promptfoo describes itself as a CLI and library for evaluating and red-teaming LLM applications. In practice, that means teams can use it to test prompts, agents, and retrieval-augmented generation systems, compare outputs across providers, automate checks in CI/CD, and generate security reports for AI-driven software. Its GitHub repository presents it as a way to move beyond trial and error and start shipping AI systems that are both more reliable and more secure.

That positioning helps explain why Promptfoo has become so visible in 2026. As enterprises move from AI demos to real deployments, the challenge is no longer just model quality. It is governance, repeatability, and risk control. Promptfoo fits that shift neatly because it turns AI testing into something structured and repeatable, rather than a vague manual exercise handled at the last minute. OpenAI’s decision to acquire the company this week only reinforces that point. OpenAI said it plans to integrate Promptfoo’s technology into OpenAI Frontier, its enterprise platform for building and operating AI coworkers.

Built for the stage after experimentation

Promptfoo is especially relevant for teams that have already moved beyond prototypes. Once an AI assistant or agent is connected to internal documents, databases, APIs, or business workflows, the real question changes. The issue is no longer whether the model “works” in a demo, but whether it behaves safely, consistently, and in a way that can be audited. That is exactly the gap Promptfoo is trying to fill. Its tooling lets developers define tests declaratively, run them from the command line, review results visually, and integrate those checks into the normal software delivery cycle.

The onboarding flow is deliberately straightforward. The official repository shows installation through npm, support for brew, the option to run commands through npx, and even installation via pip. From there, the basic pattern is to initialize an example project, run an evaluation with promptfoo eval, and inspect the outcome using promptfoo view. That simplicity matters because it lowers the barrier to adoption for engineering teams that want practical security testing without committing immediately to a large enterprise platform.

Another reason Promptfoo stands out is its broad provider support. Its GitHub documentation explicitly mentions side-by-side comparisons across OpenAI, Anthropic, Azure, Bedrock, Ollama, and other model ecosystems. That makes it useful not just as a red-teaming tool, but also as an evaluation layer for teams trying to benchmark performance, reliability, and safety across multiple vendors. In other words, Promptfoo sits at the intersection of quality assurance, application security, and model evaluation.

Why Promptfoo matters now

The timing of Promptfoo’s rise is not accidental. The growth of AI agents has expanded the attack surface. An application that can read documents, call tools, query internal systems, and make decisions autonomously creates a very different security problem from a simple chatbot. Promptfoo’s own documentation focuses heavily on risks such as prompt injection, jailbreaks, data exfiltration, tool misuse, and policy-violating behavior, all of which have become central concerns for teams deploying AI in real business environments.

That concern is no longer limited to a niche corner of security engineering. OpenAI’s acquisition announcement says Promptfoo is trusted by more than 25% of Fortune 500 companies, alongside a widely used open-source CLI and library for evaluating and red-teaming LLM applications. That figure comes from OpenAI’s own statement, so it should be read as a company claim rather than an independently audited market statistic, but it still signals how strategically important this category has become.

OpenAI’s messaging around the acquisition is revealing in its own right. The company said Promptfoo brings deep expertise in evaluating, securing, and testing AI systems at enterprise scale, and that these capabilities will become a native part of Frontier. That suggests a broader market shift: AI security tooling is moving from being a separate specialist layer into becoming a built-in expectation of enterprise AI platforms.

Open source roots, enterprise relevance

What makes Promptfoo especially interesting is that it still feels like an open-source developer tool first. It is released under the MIT license, has public documentation, and follows patterns familiar to engineers: command-line usage, config-driven tests, CI/CD hooks, and collaborative review of results. That matters in a market increasingly crowded with closed AI governance suites and proprietary observability layers. Promptfoo earned attention by being practical, transparent, and easy to adopt.

Its appeal also comes from what it does not try to be. Promptfoo is not the model, not the orchestration framework, and not the agent platform itself. It is the layer that helps answer a harder question: whether those systems can be trusted before they are released into production. In an AI ecosystem full of noise, that narrower focus may be exactly why it has become so important.

For now, OpenAI says the open-source project will continue even after the acquisition closes, while integrated enterprise features will advance inside Frontier. If that promise holds, Promptfoo could end up with a dual future: remaining an important open tool for developers while also becoming part of a larger enterprise AI control stack. Either way, its rise says something important about the market. In 2026, testing and red-teaming AI are no longer optional extras. They are becoming part of the minimum standard for shipping serious AI software.

Frequently asked questions

What is Promptfoo used for?

Promptfoo is an open-source CLI and library used to evaluate prompts, agents, and RAG systems, and to run red-teaming and vulnerability scanning against AI applications.

Does Promptfoo only work with OpenAI models?

No. Its documentation and GitHub repository list support for multiple providers and environments, including OpenAI, Anthropic, Azure, Bedrock, and Ollama.

Can Promptfoo be integrated into CI/CD pipelines?

Yes. One of its core use cases is automating AI quality, security, and compliance checks inside normal development and deployment workflows.

Will Promptfoo remain open source after OpenAI acquires it?

According to OpenAI, yes. The company said it will continue building the open-source project while expanding integrated enterprise capabilities inside OpenAI Frontier.