In systems administration and software development, the real issue with MCP (Model Context Protocol) is not that it is “dead,” but that it has stopped being a novelty and has become a piece of infrastructure that needs to be designed, governed, and audited. The project still has an active 2026 roadmap focused on four specific areas: transport scalability, agent-to-agent communication, governance maturity, and enterprise readiness. Its maintainers also stress that MCP is already used in production by companies large and small and has evolved well beyond connecting local tools.

That changes the conversation entirely. For a sysadmin or a developer, MCP should not be evaluated as hype, but as an additional layer of transport, discovery, permissions, and operations. And that is where the uncomfortable truth lies: one more layer can bring order when there are many tools and many clients, but it can also introduce latency, operational complexity, and security risks if it is deployed without a clear need. OpenAI itself notes, for example, that exposing too many tools from an MCP server can increase both costs and latency, and recommends filtering allowed tools when the full catalog is not needed.
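As a minimal sketch of that filtering advice, the helper below builds a tool entry that exposes only a named subset of a server's catalog. The field names (`type`, `server_label`, `server_url`, `allowed_tools`) follow the shape OpenAI documents for MCP tools in the Responses API at the time of writing and should be checked against the current reference. The label, URL, and tool names are hypothetical, and no request is sent.

```python
from typing import Any


def build_mcp_tool(server_label: str, server_url: str,
                   allowed_tools: list[str]) -> dict[str, Any]:
    """Return an MCP tool entry that exposes only the named tools."""
    if not allowed_tools:
        # An empty filter is almost certainly a mistake, so fail loudly.
        raise ValueError("allowed_tools must name at least one tool")
    return {
        "type": "mcp",
        "server_label": server_label,
        "server_url": server_url,
        # De-duplicate and sort so the config is stable in code review.
        "allowed_tools": sorted(set(allowed_tools)),
    }


tool = build_mcp_tool(
    "inventory",                          # hypothetical label
    "https://mcp.example.internal/mcp",   # hypothetical URL
    ["lookup_host", "list_incidents", "lookup_host"],
)
print(tool["allowed_tools"])
```

Keeping the list explicit means that a new tool added on the server side does not silently reach the model until someone reviews it.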

The standard is still growing, but it is no longer the answer to everything

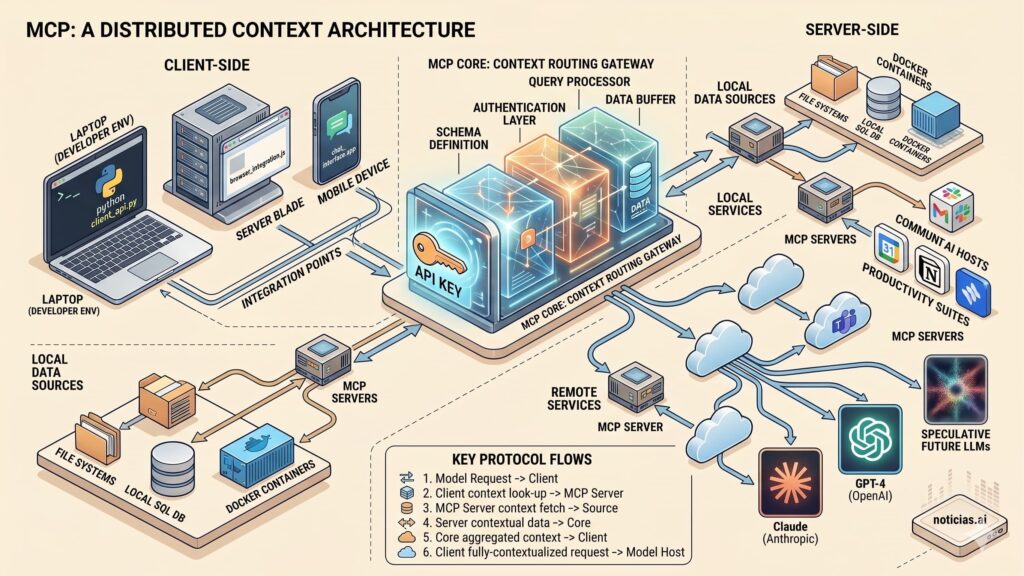

The official documentation describes MCP as an open standard for connecting AI applications with external systems, from databases and local files to search engines, calculators, or complete workflows. It also makes it clear that the ecosystem is not small: it lists support in assistants such as Claude and ChatGPT, as well as developer tools such as Visual Studio Code and Cursor. On paper, that makes it attractive for teams that want to build once and integrate across multiple clients.

The problem is that this interoperability advantage is not free. The 2026 roadmap openly acknowledges that remote transport with Streamable HTTP has unlocked a new wave of production deployments, but has also exposed concrete limitations: stateful sessions that clash with load balancers, horizontal scaling challenges, and the lack of a standard way to discover server capabilities without first connecting. When the roadmap itself prioritizes exactly those points, the message is clear: the standard is moving forward, yes, but it is still maturing in the areas that matter most for real operations.
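The load balancer problem is easy to reproduce in a toy model. The sketch below is a simulation, not MCP code: `Replica`, `RoundRobinRouter`, and `AffinityRouter` are hypothetical names, and the only assumption is that each replica keeps session state in its own process memory.

```python
import itertools
import uuid


class Replica:
    """One server instance that stores sessions in local memory only."""

    def __init__(self, name):
        self.name = name
        self.sessions = {}

    def initialize(self):
        session_id = uuid.uuid4().hex
        self.sessions[session_id] = {"calls": 0}
        return session_id

    def handle(self, session_id):
        """Return True if this replica knows the session."""
        state = self.sessions.get(session_id)
        if state is None:
            return False
        state["calls"] += 1
        return True


class RoundRobinRouter:
    """Ignores sessions and rotates through replicas."""

    def __init__(self, replicas):
        self._cycle = itertools.cycle(replicas)

    def remember(self, session_id, replica):
        pass  # a plain round-robin balancer keeps no affinity

    def route(self, session_id):
        return next(self._cycle)


class AffinityRouter:
    """Pins each session to the replica that created it."""

    def __init__(self, replicas):
        self._owner = {}

    def remember(self, session_id, replica):
        self._owner[session_id] = replica

    def route(self, session_id):
        return self._owner[session_id]


def simulate(router_cls, calls=4):
    replicas = [Replica("a"), Replica("b")]
    router = router_cls(replicas)
    owner = replicas[0]
    session_id = owner.initialize()
    router.remember(session_id, owner)
    return [router.route(session_id).handle(session_id) for _ in range(calls)]


print(simulate(RoundRobinRouter))  # [True, False, True, False]
print(simulate(AffinityRouter))    # [True, True, True, True]
```

Session affinity fixes the toy case, but it also ties a session's lifetime to a single replica, which is part of why horizontal scaling remains a challenge for stateful sessions.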

In Claude Code, MCP is already part of the workflow, but with serious warnings

Anthropic is not moving away from MCP; if anything, it is integrating it more explicitly into Claude Code. Its documentation states that MCP servers can be configured in several ways and that, for remote servers, HTTP is the recommended option and the one most widely supported for cloud services. It also allows shared project-level configuration through a .mcp.json file in the repository root, designed to live in version control so the whole team can work from the same tool definitions.
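A project-level file of that kind can be generated and reviewed like any other config. The sketch below renders one remote HTTP server entry. The `mcpServers`, `type`, and `url` keys reflect the layout Claude Code documents for `.mcp.json` at the time of writing and should be verified against the current docs; the server name and URL are hypothetical.

```python
import json


def render_mcp_json(servers: dict[str, str]) -> str:
    """Render a .mcp.json body for remote HTTP servers (name -> URL)."""
    config = {
        "mcpServers": {
            name: {"type": "http", "url": url}
            for name, url in sorted(servers.items())
        }
    }
    # Stable key order and a trailing newline keep diffs small in version control.
    return json.dumps(config, indent=2) + "\n"


text = render_mcp_json({"issue-tracker": "https://mcp.example.com/mcp"})
print(text)
```

Because the file lives in the repository, a change to a server URL shows up in code review like any other change.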

That makes a lot of sense for development teams, but it also introduces new responsibilities. Anthropic explicitly warns that third-party MCP servers are used at your own risk, that it has not verified the security of all of them, and that users should be especially cautious with servers that can retrieve untrusted content, because they may expose users to prompt injection. Claude Code also documents deployment paths designed for IT-managed setups through managed-mcp.json files stored in system locations that require administrator privileges. That alone makes one thing obvious: MCP is already being treated as something governed by IT, not just as a toy for developers.

OpenAI is embracing it too, but pushes approvals and tighter controls

OpenAI is doing something similar from another angle. Its Responses API supports MCP tools through remote servers compatible with Streamable HTTP or HTTP/SSE, and the company explains that the model can list tools, invoke them, and receive their output inside the conversation context. It also specifies that users are billed only for the tokens consumed when importing tool definitions or making tool calls, not for the tool invocation itself.

However, OpenAI also sends a very revealing signal for platform and security teams: by default, the API requires prior approval before data is shared with a connector or a remote MCP server. It also recommends carefully reviewing what information is sent to those servers, logging that activity, and, whenever possible, choosing official servers operated by the service provider rather than third-party proxies. OpenAI’s documentation also reminds users that, while MCP usage can remain compatible with Zero Data Retention and Data Residency features on OpenAI’s side, MCP servers are still third-party services, and their own data retention and residency policies still apply. For any sysadmin, that caveat alone is enough to understand that the perimeter does not end at the model vendor.
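The review-and-log advice can be approximated on the client side with a small gate in front of every outbound call. This is a local sketch, not OpenAI's approval mechanism: `review_call`, `redact`, the `SENSITIVE_KEYS` set, and the server and tool names are all hypothetical.

```python
import json
import logging

logger = logging.getLogger("mcp.audit")

# Hypothetical list of argument names that should never reach the log in clear text.
SENSITIVE_KEYS = {"password", "token", "api_key"}


def redact(arguments: dict) -> dict:
    """Mask values whose key looks sensitive; leave everything else intact."""
    return {
        key: "[REDACTED]" if key.lower() in SENSITIVE_KEYS else value
        for key, value in arguments.items()
    }


def review_call(server_label: str, tool_name: str, arguments: dict,
                auto_approved: set[str]) -> bool:
    """Log the outbound call and report whether it may skip human approval."""
    approved = tool_name in auto_approved
    record = {
        "server": server_label,
        "tool": tool_name,
        "arguments": redact(arguments),
        "auto_approved": approved,
    }
    logger.info("mcp_call %s", json.dumps(record, sort_keys=True))
    return approved


ok = review_call("crm", "search_contacts", {"query": "acme"}, {"search_contacts"})
needs_human = not review_call("crm", "delete_contact", {"id": 7}, {"search_contacts"})
```

Defaulting to "needs approval" for any tool not on the list keeps the safe path as the default, in line with the vendor guidance above.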

The right decision is not “MCP yes or no,” but “where and for what”

For sysadmins and developers, the useful conclusion is not that MCP is dead, but that it should no longer be the default choice. When the use case is narrow, the integration is stable, and the objective is tightly scoped, a direct API integration will still be simpler to deploy, monitor, and secure. When the goal is to package an app-like experience inside ChatGPT, OpenAI says that the Apps SDK is the recommended route for publishing those experiences, including ones backed by MCP tools. That is an important clue: even OpenAI draws a distinction between using MCP as a backend tool layer and using a dedicated SDK when the real goal is to build a distributable application experience.

MCP starts to justify its complexity when an organization truly needs a shared layer across multiple clients, multiple tools, and multiple teams, complete with versioned configuration, access policies, and cross-platform reuse. In that context, it can function as a common tool bus for AI systems. But if it is deployed to solve a problem that was already neatly solved with an internal API, the likely outcome is another failure domain, another source of latency, and another component that needs auditing. That is a reasonable inference from how Anthropic and OpenAI document MCP today: both support it and promote it, but both also fill their guides with warnings about approvals, allowlists, tool filtering, logging, and server security.

What systems and development teams should look at first

Before deploying MCP seriously, a technical team should answer at least four questions. First, does it genuinely need interoperability across multiple clients, or is this only a one-off integration? Second, what data will leave the perimeter, and to which servers exactly? Third, how will shared configuration be governed, whether through .mcp.json for development teams or managed files for enterprise-wide rollouts? And fourth, what controls will be applied around allowlists, approvals, tool filtering, and activity logs? None of that is theoretical: it is already spelled out in the operational documentation for Claude Code and OpenAI.
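The second and third questions lend themselves to an automated check, for example in CI. The sketch below flags any server in a shared config whose host is not on an approved list. It assumes the `mcpServers` layout with a `url` field per remote server; the host allowlist, server names, and the rule that entries without a URL need manual review are hypothetical policy choices.

```python
import json
from urllib.parse import urlparse


def unapproved_servers(config_text: str, approved_hosts: set[str]) -> list[str]:
    """Return the names of servers that are not covered by the allowlist."""
    config = json.loads(config_text)
    flagged = []
    for name, server in config.get("mcpServers", {}).items():
        url = server.get("url")
        if url is None:
            # No URL (for example a locally launched server): flag for manual review.
            flagged.append(name)
            continue
        if urlparse(url).hostname not in approved_hosts:
            flagged.append(name)
    return sorted(flagged)


sample = json.dumps({
    "mcpServers": {
        "issue-tracker": {"type": "http", "url": "https://mcp.example.com/mcp"},
        "random-proxy": {"type": "http", "url": "https://proxy.example.net/mcp"},
        "local-tool": {"command": "some-local-binary"},
    }
})
print(unapproved_servers(sample, {"mcp.example.com"}))
```

A non-empty result can fail the build, which turns the allowlist from a guideline into an enforced control.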

The reality, then, is far less dramatic than the headline “MCP is Dead” and far more technical. MCP is not dead. What has died is the fantasy that you could just plug it in and forget about it. In 2026, MCP is starting to look like what it really is: a powerful integration layer that is useful in the right place and risky when deployed without platform discipline.

FAQ

Is MCP still relevant for sysadmins and developers in 2026?

Yes. The protocol still has an active roadmap, real production usage, and support across major clients and APIs. What has changed is that it now requires more platform design, more governance, and stronger operational controls.

Does Claude Code recommend a specific transport for remote servers?

Yes. Anthropic states that HTTP is the recommended option for connecting remote MCP servers and that it is the most widely supported transport for cloud services.

What security risks are the vendors themselves highlighting?

Anthropic warns about the risks of using unverified third-party MCP servers and explicitly mentions exposure to prompt injection. OpenAI recommends prior approvals, official servers where possible, logging, and careful review of any data sent to remote servers.

What does OpenAI recommend when the real goal is to publish an app in ChatGPT?

OpenAI says the Apps SDK is the recommended path for building and publishing app experiences, even when the app uses tools backed by MCP.