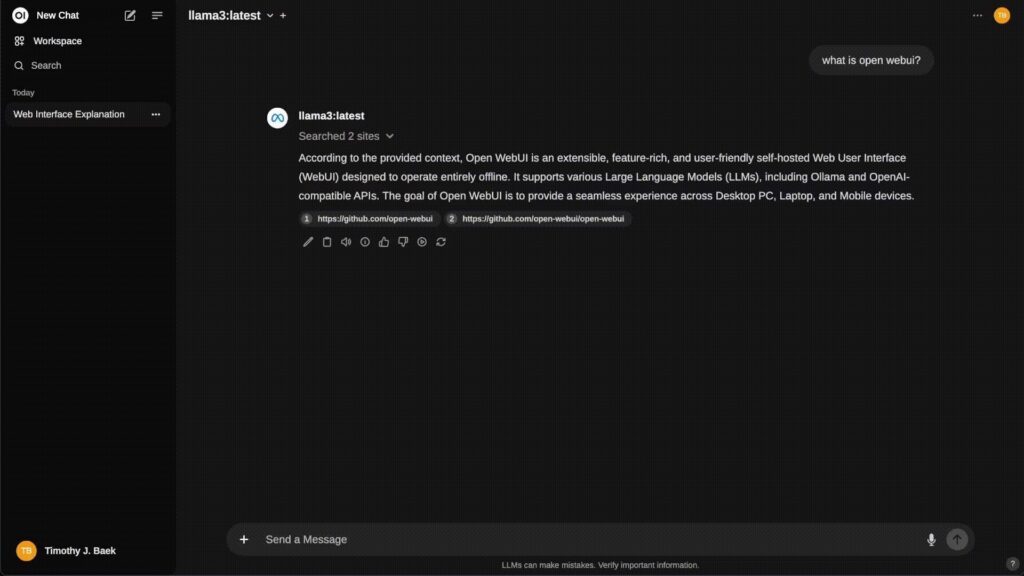

While everyone talks about models and APIs, one key piece is often overlooked: the interface and platform where your users actually work with AI. That’s where Open WebUI comes in. It is an open-source frontend that has quickly become one of the most polished and capable ways to work with local models (via Ollama) and any OpenAI-compatible API, and it is self-hosted, extensible, and able to run fully offline.

If you’re thinking about deploying your own “internal ChatGPT” on-premises or in your own cloud, Open WebUI is, right now, one of the strongest candidates.

What exactly is Open WebUI?

Open WebUI is a self-hosted AI web platform built around a few core ideas:

- It runs entirely on your own infrastructure (server, NAS, homelab, Kubernetes…).
- It integrates with:
  - Ollama (local models such as Llama, Phi, Qwen, etc.).
  - Any OpenAI-compatible API (OpenAI, Groq, Mistral, OpenRouter, LM Studio, and more).
- It includes:
  - Multi-model chat.
  - Built-in local RAG (document ingestion and libraries).
  - Web browsing, external search, image generation.
  - User management, groups, permissions, and a plugin system.

In practice, it’s the user experience layer that many self-hosted LLM deployments were missing.

Key strengths for admins and enterprises

1. Self-hosted and offline-friendly

Open WebUI is designed for environments where:

- You don’t want (or are not allowed) to send data to external services.

- You need the tool to work on segmented or isolated networks.

You can deploy it with:

- Docker (the most common and easiest path).

- Python/pip (native installation).

- Kubernetes (manifests, kustomize, Helm).
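For the two non-Docker routes, a rough sketch of the commands follows. The `open-webui` pip package, the `open-webui serve` command and the Helm repository URL are taken from the project's documentation at the time of writing; check them, and the Python version the package currently supports, before relying on them. The two options are alternatives, not steps of a single script.

```shell
# Option A: native install with pip, inside a dedicated virtual environment
python3 -m venv open-webui-env
. open-webui-env/bin/activate
pip install open-webui
open-webui serve   # runs in the foreground; UI on http://localhost:8080

# Option B: Kubernetes via the project's Helm chart
# (the release name "open-webui" is an arbitrary example)
helm repo add open-webui https://helm.openwebui.com/
helm repo update
helm install open-webui open-webui/open-webui
```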

There’s also an offline mode: setting the environment variable `HF_HUB_OFFLINE=1` stops Open WebUI from trying to download models (such as the embedding models used for RAG) from Hugging Face, which is crucial for locked-down environments.
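In a Docker deployment this is one more environment variable on the container. A minimal sketch, assuming the models Open WebUI needs were downloaded beforehand (for example during a first run with network access) and persist in the `open-webui` data volume, because nothing will be fetched at runtime:

```shell
# Run Open WebUI without letting it reach the Hugging Face Hub.
# Assumption: required models are already cached in the open-webui volume.
docker run -d \
  -p 3000:8080 \
  -e HF_HUB_OFFLINE=1 \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:main
```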

2. Flexible integration with local and remote models

Open WebUI doesn’t lock you into a single provider:

- Ollama
  - You can run the standard container and point it to an existing Ollama server.
  - Or use an “all-in-one” image that bundles Open WebUI + Ollama in the same container (with or without GPU).
- OpenAI-compatible APIs
  - By setting the base URL and API key, you can connect to:
    - OpenAI
    - GroqCloud
    - Mistral
    - OpenRouter
    - LM Studio
    - And basically any OpenAI-compatible backend.

That means you can swap models or providers without changing the interface, and even use multiple models in parallel depending on the use case.
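Both kinds of connection can be configured with environment variables. The sketch below points one container at a remote Ollama server and at three OpenAI-compatible backends at once (GroqCloud, OpenRouter and an LM Studio server on the Docker host). `OLLAMA_BASE_URL` and the semicolon-separated `OPENAI_API_BASE_URLS` / `OPENAI_API_KEYS` (paired by position) are Open WebUI settings; the host name `ollama.internal` and the `lm-studio` dummy key are hypothetical placeholders, and each provider URL should be checked against that provider's documentation.

```shell
# One UI, several backends: the Nth base URL is paired with the Nth key.
# ollama.internal is a placeholder host; 11434 is Ollama's default port.
docker run -d \
  -p 3000:8080 \
  --add-host=host.docker.internal:host-gateway \
  -e OLLAMA_BASE_URL=http://ollama.internal:11434 \
  -e OPENAI_API_BASE_URLS="https://api.groq.com/openai/v1;https://openrouter.ai/api/v1;http://host.docker.internal:1234/v1" \
  -e OPENAI_API_KEYS="$GROQ_API_KEY;$OPENROUTER_API_KEY;lm-studio" \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:main
```

Models from every backend then show up in the same model selector. Keep in mind that Open WebUI stores many connection settings in its database after the first start, so if a changed variable seems to be ignored on a later restart, check the connection settings in the admin panel.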

3. User management, permissions and enterprise readiness

For serious deployments, identity and access control are non-negotiable. Open WebUI ships with:

- RBAC (Role-Based Access Control):
  - Define who can use which models.
  - Restrict model creation/pulling to admins only.
- Granular groups and permissions, tailored for multi-team environments.
- SCIM 2.0 support:
  - Integrate with Okta, Azure AD, Google Workspace, etc.
  - Automate user and group provisioning and lifecycle.

In other words, it’s not “just a pretty chat UI” – it’s a piece that can fit into your corporate identity architecture.
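As an illustration, a locked-down, identity-integrated deployment might look like the sketch below: new accounts start as pending until an admin approves them, and users sign in through an OpenID Connect provider. The variable names follow Open WebUI's environment-variable reference as of this writing and should be verified for your release; the domain names, client ID and `OIDC_CLIENT_SECRET` are hypothetical placeholders. SCIM provisioning has its own settings and is not shown.

```shell
# Identity-integrated deployment; every value here is a placeholder.
# WEBUI_URL is the public URL the IdP redirects back to.
# New users are created as "pending" until an admin activates them.
docker run -d \
  -p 3000:8080 \
  -e WEBUI_URL=https://chat.example.com \
  -e DEFAULT_USER_ROLE=pending \
  -e ENABLE_OAUTH_SIGNUP=true \
  -e OPENID_PROVIDER_URL=https://idp.example.com/.well-known/openid-configuration \
  -e OAUTH_CLIENT_ID=open-webui \
  -e OAUTH_CLIENT_SECRET="$OIDC_CLIENT_SECRET" \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:main
```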

4. Local RAG, web browsing and integrated search

Out of the box, Open WebUI includes features that normally require stitching together several components:

- Local RAG:
  - Upload documents directly to a chat.
  - Create reusable document libraries.
  - Reference files with `#` before your query.
- Web search for RAG:
  - Supports providers like SearXNG, Google PSE, Brave, DuckDuckGo, Tavily, Bing and others.
  - Results are injected directly into the model context.
- Web browsing:
  - You can pass a URL using `#https://...` and the system will pull content from that site into the conversation.

This makes it ideal for:

- Internal documentation assistants.

- Research-oriented agents.

- Internal support tools and knowledge bases.
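The same RAG features can also be driven from scripts. The sketch below uploads a document and then asks a question grounded in it. It assumes an API key generated in Open WebUI's account settings and exported as the hypothetical `OPENWEBUI_API_KEY`, a model named `llama3.1`, a local file `handbook.pdf`, and `jq` installed; the endpoint paths (`/api/v1/files/`, `/api/chat/completions`) and the `files` field follow Open WebUI's API documentation, which may change between releases.

```shell
#!/usr/bin/env bash
set -euo pipefail

BASE=http://localhost:3000
AUTH="Authorization: Bearer $OPENWEBUI_API_KEY"

# 1. Upload a document; the JSON response includes the new file's id.
FILE_ID=$(curl -s -X POST "$BASE/api/v1/files/" \
  -H "$AUTH" -H "Accept: application/json" \
  -F "file=@handbook.pdf" | jq -r '.id')

# 2. Ask a question with that file attached as retrieval context.
curl -s -X POST "$BASE/api/chat/completions" \
  -H "$AUTH" -H "Content-Type: application/json" \
  -d "$(jq -n --arg id "$FILE_ID" '{
        model: "llama3.1",
        messages: [{role: "user", content: "Summarize the vacation policy."}],
        files: [{type: "file", id: $id}]
      }')"
```

Depending on the version, uploaded files may be processed asynchronously, so a short wait can be needed before the file is usable. To query a whole document library instead, the docs describe passing `{"type": "collection", "id": ...}` in the same `files` array.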

5. Plugins, pipelines and native Python tools

For developers and platform teams, Open WebUI offers:

- Pipelines / Plugin framework:
  - A way to inject your own logic and Python libraries.
  - Enables things like:
    - Custom function calling.
    - Per-user rate limiting.
    - Usage monitoring (e.g. via Langfuse).
    - Live translation (e.g. with LibreTranslate).
    - Toxic message filtering.
- Native Python tooling:
  - Built-in code editor in the tools workspace.
  - “Bring Your Own Functions” (BYOF): you can expose plain Python functions as tools to the LLM.

Effectively, you can turn Open WebUI into a corporate agent platform with your business logic baked in.
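Pipelines run as a separate service that Open WebUI talks to as if it were another OpenAI-compatible API. A minimal sketch based on the Pipelines project's README; the image name, port 9099 and the default API key `0p3n-w3bu!` are that project's defaults at the time of writing, and the default key should be treated as a test value only (see the README for how to change it).

```shell
# Start the Pipelines server, listening on port 9099.
# The named volume keeps installed pipelines across restarts.
docker run -d \
  -p 9099:9099 \
  --add-host=host.docker.internal:host-gateway \
  -v pipelines:/app/pipelines \
  --name pipelines \
  --restart always \
  ghcr.io/open-webui/pipelines:main
```

In Open WebUI, the server is then added as an OpenAI API connection with the URL `http://localhost:9099` (or `http://host.docker.internal:9099` when Open WebUI itself runs in Docker) and the key above; filters and pipes such as rate limiting or Langfuse monitoring are installed on that server.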

6. A polished user experience

Although Open WebUI is a very technical tool under the hood, its UX is surprisingly friendly:

- Responsive UI for desktop, laptop and mobile.

- PWA support to “install it as an app” on mobile, even when running locally.

- Full Markdown and LaTeX support.

- Integrated voice and video calls.

- Multilingual support (i18n) and an active community adding new languages.

For internal deployments, this matters a lot: users adopt it quickly, with minimal training.

Quick install: Docker in one line

If you already have Ollama on the same machine:

```shell
# Open WebUI on http://localhost:3000, using an Ollama server running on the host.
# The open-webui volume keeps chats, users and settings across upgrades.
docker run -d \
  -p 3000:8080 \
  --add-host=host.docker.internal:host-gateway \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:main
```

If you only want to use the OpenAI API:

```shell
# Open WebUI connected only to the OpenAI API (replace your_api_key).
docker run -d \
  -p 3000:8080 \
  -e OPENAI_API_KEY=your_api_key \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:main
```

If you prefer Open WebUI + Ollama in a single container (CPU only):

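```shell
# Bundled image: Open WebUI + Ollama in one container.
# The ollama volume stores downloaded models; open-webui stores app data.
docker run -d \
  -p 3000:8080 \
  -v ollama:/root/.ollama \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:ollama
```

For GPU support, add `--gpus all` to the `docker run` command. This requires an NVIDIA GPU and the NVIDIA Container Toolkit on the host.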
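Put together, the bundled command with GPU access looks like the sketch below, assuming the host is already set up to run GPU containers. If Ollama runs elsewhere, the project also publishes a `:cuda` variant of the standalone image for accelerating Open WebUI's own local models (such as embeddings); verify the tag against the current README before using it.

```shell
# Open WebUI + Ollama in one container, with access to all host GPUs.
docker run -d \
  -p 3000:8080 \
  --gpus all \
  -v ollama:/root/.ollama \
  -v open-webui:/app/backend/data \
  --name open-webui \
  --restart always \
  ghcr.io/open-webui/open-webui:ollama

# Alternative: standalone Open WebUI (CUDA build) talking to Ollama on the host.
# docker run -d -p 3000:8080 --gpus all \
#   --add-host=host.docker.internal:host-gateway \
#   -v open-webui:/app/backend/data --name open-webui --restart always \
#   ghcr.io/open-webui/open-webui:cuda
```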

What kind of projects is Open WebUI best suited for?

Open WebUI is especially compelling if:

- You want to build your own internal ChatGPT-like assistant using local or self-hosted models.

- You care deeply about data control, compliance and sovereignty (on-prem, private cloud, your own VPS, etc.).

- Your IT team prefers self-hosted, IdP-integrated solutions that are easy to observe and monitor.

- You want a single frontend even if you use multiple models and providers.

- You’re working on:
  - Internal support assistants.
  - Documentation and technical knowledge tools.
  - Productivity assistants for dev, support, or business teams.
  - Educational environments or AI labs.