Anthropic is reportedly preparing a new Claude Code feature called Bugcrawl, aimed at analysing entire repositories in search of programming errors and possible logic flaws. The tool has not yet appeared as a stable feature and has not been officially announced by the company, but the first references spotted by TestingCatalog point to another step in the evolution of Claude Code from a programming assistant into a review, security and automation platform for development teams.

The idea is easy to explain, although much harder to execute well: let Claude crawl through a codebase, identify problems, suggest fixes and help teams turn that analysis into actionable work. If its launch is confirmed, Bugcrawl would sit between pull request review features and the security analysis capabilities Anthropic has already added to Claude Code in recent months.

A feature still in testing, not an official launch

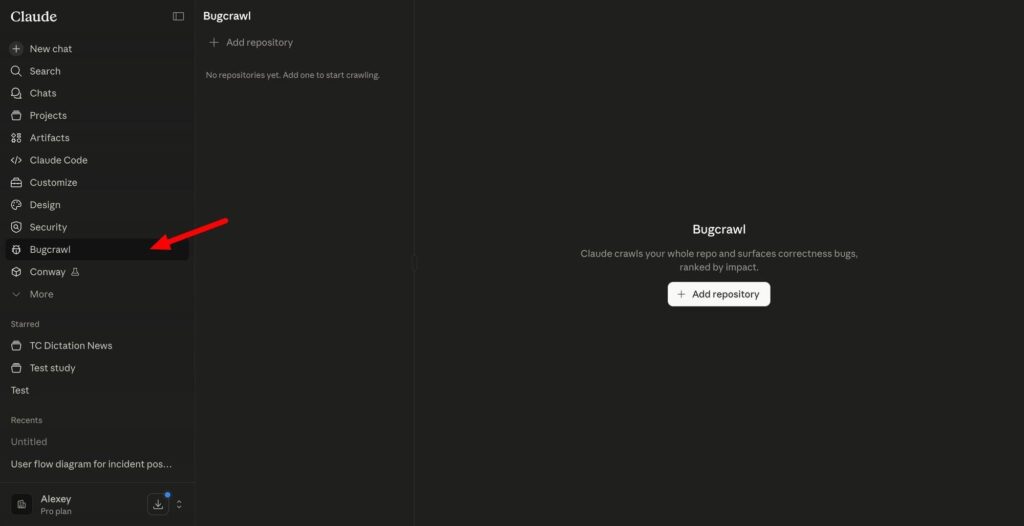

The available information suggests that Bugcrawl appears as a dedicated entry in Claude Code’s side navigation. When opened, the interface reportedly shows a repository selector and a clear warning: the analysis consumes tokens at a high rate, so Anthropic recommends starting with small repositories before moving on to larger projects.

That warning offers an important clue about the type of work the tool would perform. This would not be a simple review of a few modified lines, but a broader exploration of the source code, with the ability to examine relationships between files, execution flows, internal dependencies and possible errors that do not always appear in a pull request diff.

TestingCatalog interprets Bugcrawl as a feature that could let Claude loose across an entire repository to search for general bugs and propose fixes. Its approach would differ from Claude Code Security, which focuses on vulnerabilities, and from Code Review, which analyses specific changes in pull requests. Bugcrawl would cover a broader category: quality issues, regressions, logic errors, broken edge cases and unexpected behaviour.

It is worth stressing that, for now, there is no confirmed release date or public Anthropic documentation for Bugcrawl. The feature appears to be in testing or preparation, so its final capabilities, availability, pricing and limits could change before it reaches users.

How Bugcrawl fits into Claude Code’s strategy

Claude Code has become one of Anthropic’s most important products for the developer market. The tool already lets users work with code from the terminal, integrate with GitHub, respond to mentions in issues or pull requests, create changes, explain codebases and automate tasks within the development workflow.

In February 2026, Anthropic introduced Claude Code Security in a limited research preview. The company described it as a capability for scanning codebases, detecting security vulnerabilities and suggesting targeted patches for human review. The idea was not to replace security teams, but to provide a system capable of reasoning about code with more context than a traditional static analysis tool.

Code Review followed later. This Claude Code feature analyses pull requests and posts inline comments when it finds problems. According to Anthropic’s documentation, the review uses a fleet of specialised agents that inspect changes in the context of the entire repository, looking for logic errors, vulnerabilities, broken edge cases and subtle regressions.

Bugcrawl would fit as a third layer. Security looks for vulnerabilities. Code Review looks at specific changes before they are merged. Bugcrawl could look at the project more broadly, even without waiting for someone to open a pull request. That would bring it closer to a continuous software quality auditing system, although with the computational cost of an agent that needs to read, reason over and compare many parts of a repository.

For engineering teams, the potential value lies in detecting errors before they reach production. Many bugs are not clear vulnerabilities and do not appear in unit tests. They can live in a poorly written condition, incomplete validation, an interaction between modules, a data migration, a user interface flow or an old assumption that is no longer true. Code agents have room to help in that area, as long as their findings are reviewable and do not generate excessive noise.

Token cost will be one of the key issues

The warning about high token consumption is not a minor detail. Analysing an entire repository means reading many files, maintaining context, forming hypotheses, checking relationships and, in some cases, proposing changes. All of that has a cost. The larger the project, the higher the token volume needed for a useful review.
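To get a feel for the scale involved, the reasoning above can be turned into a rough back-of-the-envelope estimate. The sketch below is not how Bugcrawl measures usage, which has not been documented. It assumes about four characters per token (a common rule of thumb that varies by tokenizer and language), and the extension list, the skipped folders and the function name `estimate_repo_tokens` are illustrative choices. It only counts a single read of each file, so an agent that revisits files and reasons over them would likely consume more.

```python
import os

# Assumption: roughly 4 characters per token; real tokenizers differ.
CHARS_PER_TOKEN = 4
# Assumption: an illustrative set of source file extensions.
SOURCE_EXTENSIONS = {".py", ".js", ".ts", ".go", ".java", ".rs"}
# Assumption: folders that a crawl would normally skip.
SKIPPED_DIRS = {".git", "node_modules"}


def estimate_repo_tokens(root, chars_per_token=CHARS_PER_TOKEN):
    """Return (file_count, estimated_tokens) for one read of every source file."""
    total_chars = 0
    file_count = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune skipped folders in place so os.walk does not descend into them.
        dirnames[:] = [d for d in dirnames if d not in SKIPPED_DIRS]
        for name in filenames:
            if os.path.splitext(name)[1] not in SOURCE_EXTENSIONS:
                continue
            path = os.path.join(dirpath, name)
            try:
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total_chars += len(handle.read())
            except OSError:
                continue  # unreadable file: leave it out of the estimate
            file_count += 1
    return file_count, total_chars // chars_per_token


if __name__ == "__main__":
    files, tokens = estimate_repo_tokens(".")
    print(f"{files} source files, about {tokens} tokens for a single pass")
```

Running it on a small repository first, then on a larger one, shows how quickly the lower bound grows with project size.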

That is why Bugcrawl seems likely to be aimed, at least initially, at teams on Team or Enterprise plans, where the usage cost is easier to justify against the value of finding bugs before production. For an individual developer, running a full crawl on a large repository could be expensive or impractical unless there are clear limits, partial modes or configurable analysis criteria.

The question will not only be how much it costs, but how it can be controlled. A tool of this kind would need to support analysis by folders, branches, modules, languages, critical paths or types of issue. It would also have to distinguish between real problems, debatable recommendations and simple style preferences. If each crawl generates dozens of low-quality warnings, teams will stop using it. If it finds fewer issues but explains them well, it could become a useful part of the development workflow.
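The kind of control described above can be illustrated with a small triage sketch. Nothing here reflects Bugcrawl’s actual output or settings, which are not public. The `Finding` record, the category labels ("bug", "recommendation", "style"), the confidence score and the 0.7 threshold are all hypothetical, chosen only to show how scoping by folder and filtering out low-value warnings could reduce noise.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Finding:
    path: str          # repository-relative path, POSIX style
    category: str      # hypothetical labels: "bug", "recommendation", "style"
    confidence: float  # hypothetical score between 0.0 and 1.0
    summary: str


def in_scope(path, include_dirs):
    """True if path lies inside one of the included folders."""
    parts = PurePosixPath(path).parts
    for folder in include_dirs:
        folder_parts = PurePosixPath(folder).parts
        # Compare whole path components so "src/billing" does not
        # accidentally match "src/billing_old".
        if parts[:len(folder_parts)] == folder_parts:
            return True
    return False


def triage(findings, include_dirs, min_confidence=0.7):
    """Keep in-scope, high-confidence bugs, most confident first."""
    kept = [
        f for f in findings
        if f.category == "bug"
        and f.confidence >= min_confidence
        and in_scope(f.path, include_dirs)
    ]
    return sorted(kept, key=lambda f: f.confidence, reverse=True)


if __name__ == "__main__":
    sample = [
        Finding("src/billing/invoice.py", "bug", 0.9, "rounding applied twice"),
        Finding("src/billing/tax.py", "style", 0.95, "long function"),
        Finding("src/billing_old/legacy.py", "bug", 0.8, "unchecked None"),
        Finding("src/billing/refund.py", "bug", 0.4, "possible race"),
    ]
    for finding in triage(sample, ["src/billing"]):
        print(finding.path, "-", finding.summary)
```

In this example only the first finding survives: the style note, the out-of-scope file and the low-confidence guess are dropped, which is the trade-off between fewer findings and better ones.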

It will also be important to see how Anthropic handles human review. In software, an AI-suggested fix should not be applied without oversight. A change that looks reasonable can break compatibility, alter an API, introduce a regression or fix a symptom without addressing the root cause. The best use of Bugcrawl, if it reaches the market, would be as a detection and prioritisation system, not as an unsupervised autopilot.

Competition among programming agents

Anthropic’s move fits into a broader race. OpenAI is pushing Codex and its development agents, Google is working on Jules, xAI is moving forward with Grok Build, and several startups are trying to occupy the space between IDEs, code review, testing, security and DevOps automation. Programming has become one of the areas where generative AI offers the clearest returns, but also one where mistakes can have more direct consequences.

Current models already help write functions, explain legacy code, generate tests and review changes. The next stage is for them to reason across entire repositories and work with greater autonomy. Bugcrawl would move precisely in that direction: instead of waiting for a specific developer question, it would launch a broader inspection and return findings.

The difference between a useful tool and a risky one will depend on accuracy, context and traceability. A good report must explain why something is a bug, where it happens, what impact it may have, how to reproduce it and what change would fix it. It must also acknowledge uncertainty. In real codebases, many decisions are shaped by historical compromises, external dependencies or business requirements that are not always written in the repository.
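Those requirements map naturally onto a structured record. The sketch below is one possible shape for such a report, not a format Anthropic has published. The `BugReport` class, its field names and the `missing_fields` helper are hypothetical, and the check simply flags any element of the report that was left empty.

```python
from dataclasses import dataclass, fields


@dataclass
class BugReport:
    # Hypothetical fields mirroring the elements a good report should cover.
    why: str              # why this is considered a bug
    where: str            # file and location
    impact: str           # what could go wrong for users or systems
    reproduction: str     # steps or input that trigger the problem
    proposed_change: str  # the suggested fix
    uncertainty: str      # what the reviewer could not verify


def missing_fields(report):
    """Return the names of report fields that are empty or whitespace only."""
    return [
        f.name for f in fields(report)
        if not getattr(report, f.name).strip()
    ]


if __name__ == "__main__":
    report = BugReport(
        why="Discount is applied before and after tax",
        where="src/billing/invoice.py, function total()",
        impact="Customers are undercharged on taxed items",
        reproduction="",
        proposed_change="Apply the discount once, before tax",
        uncertainty="Unclear whether legacy invoices rely on this behaviour",
    )
    print(missing_fields(report))  # ['reproduction']
```

A team could treat any report with missing fields as incomplete and send it back for more evidence before anyone spends time on the proposed fix.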

Anthropic appears to be building Claude Code as a working layer for professional teams, not just as a chatbot that writes code snippets. Bugcrawl, if ultimately confirmed, would reinforce that reading. The promise is not that AI will code alone, but that it will help teams find bugs, review more effectively and reduce some of the repetitive work that consumes hours before every deployment.

The interest is obvious. So is the need for caution. An AI-based bug crawler may find errors that go unnoticed, but it does not replace testing, technical review, observability or product knowledge. In software engineering, trust is earned through repeated results, low false positives and fixes that hold up in production. Bugcrawl will have to prove exactly that.

Frequently asked questions

What is Anthropic Bugcrawl?

Bugcrawl appears to be a Claude Code feature in testing that would analyse entire repositories and search for programming errors, regressions or logic flaws.

Has Anthropic officially launched Bugcrawl?

No. There is no official announcement or public release date. The feature has been spotted in testing and does not appear to be generally available yet.

How is it different from Claude Code Security?

Claude Code Security focuses on security vulnerabilities. Bugcrawl would target general bugs and code quality issues, a broader category.

Why could Bugcrawl consume a lot of tokens?

Because reviewing an entire repository requires reading many files, maintaining context across them, reasoning about internal dependencies and generating findings with possible fixes.