The Proxmox ecosystem continues to attract tools designed for administrators who want to work faster from the terminal, without giving up security controls or integration with modern automation workflows. One of the latest is proxxx, an open source project by Fabrizio Salmi that presents itself as a “terminal cockpit” for Proxmox VE and Proxmox Backup Server.

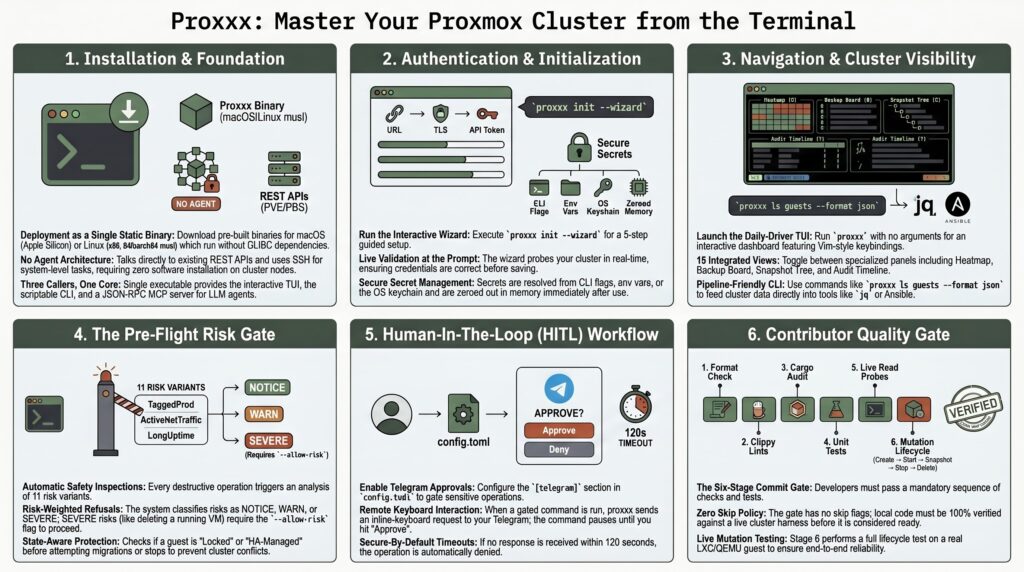

The idea is straightforward: a single binary written in Rust that combines a CLI, TUI, MCP server, alert daemon and human approval system, without installing agents on the cluster. Instead of asking the administrator to deploy another persistent service inside the infrastructure, proxxx talks to what already exists: it uses REST against Proxmox VE and Proxmox Backup Server, and relies on SSH for tasks not covered by the API.

A tool for those who live in the terminal

Proxmox VE already has a mature and comprehensive web interface, and for that reason proxxx does not try to replace it. Its focus is on a different type of use: administrators, SRE teams, DevOps engineers and homelab operators who need to query, filter, execute and automate tasks from the console, with stable JSON output and predictable commands.

The project brings together several layers in a single executable. It can list nodes and guests, navigate the cluster from a TUI, launch operations such as start, stop, migration, snapshot, cloning, backup or disk move, and export results in JSON format for integration with tools such as jq or CI pipelines. It also offers console access via SSH, serial, SPICE and noVNC, although in the last two cases it acts as a bridge to external tools or the browser, not as its own graphical viewer.
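
As a rough illustration of what stable JSON output makes possible, the sketch below filters a guest listing in Python, much as one would with jq. The JSON shape (the `vmid`, `name`, `status` and `tags` fields) is assumed for illustration and is not taken from the proxxx documentation.

```python
import json

# Hypothetical sample of a guest listing; the real proxxx schema may differ.
SAMPLE_OUTPUT = """
[
  {"vmid": 100, "name": "web-01", "status": "running", "tags": ["production"]},
  {"vmid": 101, "name": "db-01", "status": "stopped", "tags": ["production"]},
  {"vmid": 200, "name": "lab-01", "status": "running", "tags": ["lab"]}
]
"""


def running_guests_with_tag(raw_json: str, tag: str) -> list[int]:
    """Return the VMIDs of running guests that carry the given tag."""
    guests = json.loads(raw_json)
    return [
        g["vmid"]
        for g in guests
        if g.get("status") == "running" and tag in g.get("tags", [])
    ]


if __name__ == "__main__":
    print(running_guests_with_tag(SAMPLE_OUTPUT, "production"))
```

In a CI job, the same function could be fed the captured standard output of whichever listing command is in use, so the pipeline fails or proceeds based on structured data instead of scraped text.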

The choice of Rust does not seem accidental. proxxx aims to be distributed as a static binary, without an installer and with few external dependencies. According to the repository documentation, prebuilt binaries are available for macOS on Apple Silicon and Linux x86_64-musl, while ARM64 Linux is built from source due to a toolchain issue documented by the project. The Linux musl artifact is presented as statically linked, with the aim of running across very different distributions without depending on a specific glibc version.

| Area | What proxxx provides |
|---|---|
| Daily management | TUI with search, views for nodes, guests, storage, backups and operation queue |
| Automation | CLI with JSON output, stable exit codes and script-friendly commands |
| Operational security | Pre-flight risk gate, human approval via Telegram and policies by tag, VMID or wildcard |
| Proxmox Backup Server | REST browsing and restore through supervised proxmox-backup-client |
| Artificial Intelligence | MCP server over stdio with a fixed registry of 10 tools for LLM agents |
| Supply chain | SHA-256 checksums, keyless signing with Sigstore/cosign and CycloneDX SBOM |
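
The supply-chain row mentions SHA-256 checksums. A minimal sketch of how a downloaded artifact could be checked against a published digest is shown here; the function names are illustrative, and the file name and location of the project's checksum list are deliberately not assumed.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large binaries do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_checksum(path: Path, expected_hex: str) -> bool:
    """Compare the computed digest with the published one (case-insensitive)."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.strip().lower())
```

A checksum only proves that the file matches the published digest. The cosign signature is what ties that digest to the project's release process, so the two checks complement each other.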

The key difference: controls before touching production

The most interesting part of proxxx is not listing virtual machines, but how it handles destructive operations. The project includes a “pre-flight risk gate” with 11 risk variants, including locked machines, running guests, HA-managed resources, long uptime, production tags or active network traffic. If an operation looks dangerous, the tool can refuse to execute it unless the operator explicitly overrides the gate with a dedicated option.
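
The general shape of such a gate can be sketched in a few lines. This is not the project's implementation: the `Guest` fields, the risk labels, the 30-day uptime threshold and the `force` parameter are all assumptions chosen for illustration, and only five of the risk categories named above are modelled.

```python
from dataclasses import dataclass, field


@dataclass
class Guest:
    """Hypothetical snapshot of a guest's state; field names are illustrative."""
    vmid: int
    locked: bool = False
    running: bool = False
    ha_managed: bool = False
    uptime_days: float = 0.0
    tags: list[str] = field(default_factory=list)


# Assumed threshold for "long uptime"; the article does not give the real value.
LONG_UPTIME_DAYS = 30


def assess_risks(guest: Guest) -> list[str]:
    """Collect every risk that applies, rather than stopping at the first one."""
    risks = []
    if guest.locked:
        risks.append("locked")
    if guest.running:
        risks.append("running")
    if guest.ha_managed:
        risks.append("ha-managed")
    if guest.uptime_days >= LONG_UPTIME_DAYS:
        risks.append("long-uptime")
    if "production" in guest.tags:
        risks.append("production-tag")
    return risks


def gate(guest: Guest, force: bool = False) -> tuple[bool, list[str]]:
    """Allow the operation only if no risk was found or the operator forced it."""
    risks = assess_risks(guest)
    return (not risks or force), risks
```

Returning the full list of risks even when the operation is forced keeps the override visible in logs, which is the point of a gate that can be bypassed only explicitly.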

On top of that layer, it adds HITL, short for human-in-the-loop. In practice, this means certain actions can require human approval through Telegram. If a policy matches, the operation remains pending; if nobody approves it within 120 seconds, it is denied by default. This detail matters because it prevents a lack of response from becoming implicit permission, something especially sensitive when automation systems or AI agents are connected to real infrastructure.
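
The deny-by-default behaviour is easy to get wrong, so a small sketch may help. It assumes a hypothetical `poll` callback that returns `True` (approved), `False` (rejected) or `None` (no answer yet); the Telegram transport itself is left out, and only the 120-second figure comes from the article.

```python
import time
from typing import Callable, Optional

APPROVAL_TIMEOUT_SECONDS = 120  # timeout stated in the article


def wait_for_decision(
    poll: Callable[[], Optional[bool]],
    timeout: float = APPROVAL_TIMEOUT_SECONDS,
    interval: float = 1.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Return True only on explicit approval; rejection and silence both deny."""
    deadline = clock() + timeout
    while clock() < deadline:
        decision = poll()
        if decision is not None:
            return decision
        sleep(interval)
    return False  # nobody answered in time: deny by default
```

The `clock` and `sleep` parameters exist only so the timeout path can be exercised in tests without waiting two minutes. The important property is that the final `return False` is the only way out of the loop when no answer arrives.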

The MCP integration points precisely to that new scenario. proxxx includes a stdio JSON-RPC server with a fixed registry of 10 tools and a SHA-256 fingerprint for auditing. Its goal is to allow assistants or LLM agents to interact with a Proxmox cluster in a bounded way, with a tool surface closed at compile time and risk controls before executing changes. At a time when many teams are testing agents for administration tasks, this type of barrier can make the difference between an eye-catching demo and reasonable use in real systems.
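
One way to picture a fingerprint over a fixed registry is to hash a canonical encoding of the tool list, so that adding, removing or renaming a tool changes the digest. The sketch below assumes that approach; the tool names are invented, and the article does not say exactly what proxxx feeds into its SHA-256 fingerprint.

```python
import hashlib
import json

# Hypothetical tool names; the article only says the registry holds 10 tools.
TOOL_REGISTRY = ("list_nodes", "list_guests", "guest_status")


def registry_fingerprint(tools: tuple[str, ...]) -> str:
    """Hash a canonical encoding of the registry so any change is detectable."""
    canonical = json.dumps(sorted(tools), separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


if __name__ == "__main__":
    print(registry_fingerprint(TOOL_REGISTRY))
```

An auditor can record the digest once and compare it on later runs: a mismatch means the tool surface exposed to the agent is no longer the one that was reviewed.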

There is also care in secret management. The configuration can resolve credentials through command-line flags, environment variables, a file with 0600 permissions, TOML or the operating system keychain. Loaded values are stored in Zeroizing<String>, so they are wiped from memory when dropped. This does not automatically make the tool suitable for every regulated environment, but it does show technical concern for details that many small projects leave for later.
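
A layered lookup of this kind can be sketched as follows. The order shown (flag, then environment, then file) is an assumption, since the article lists the sources without stating their precedence. The environment variable name in the usage note is hypothetical, and the TOML and keychain sources are omitted for brevity.

```python
import stat
from pathlib import Path
from typing import Mapping, Optional


def read_secret_file(path: Path) -> str:
    """Read a secret only if the file is private to its owner (mode 0600)."""
    mode = stat.S_IMODE(path.stat().st_mode)
    if mode != 0o600:
        raise PermissionError(f"{path} has mode {mode:04o}, expected 0600")
    return path.read_text(encoding="utf-8").strip()


def resolve_secret(
    flag_value: Optional[str],
    env: Mapping[str, str],
    env_var: str,
    secret_file: Optional[Path],
) -> Optional[str]:
    """Try each source in an assumed order: flag, environment, then file."""
    if flag_value:
        return flag_value
    if env.get(env_var):
        return env[env_var]
    if secret_file is not None and secret_file.exists():
        return read_secret_file(secret_file)
    return None
```

A call might look like `resolve_secret(None, os.environ, "PROXXX_TOKEN", Path("token"))`, where `PROXXX_TOKEN` is an invented name. Python strings are immutable and cannot be wiped reliably, so this sketch does not reproduce the `Zeroizing<String>` behaviour described above.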

Proxmox, PBS and the rise of lightweight operations tools

The interest around proxxx comes at a favourable time for Proxmox. The platform has strengthened its position as an open alternative for virtualisation, especially among organisations looking to reduce dependence on proprietary stacks or review costs. Proxmox VE combines KVM virtual machines, LXC containers, storage, networking, high availability and web-based administration; Proxmox Backup Server adds deduplicated backups, integration with Proxmox VE and restore capabilities from a specialised environment.

The official Proxmox Backup Server documentation notes that PBS can be integrated into a Proxmox VE node or cluster as storage, both from the web interface and from the command line. That native integration is one of the reasons tools like proxxx can build value on top without replacing core components: they rely on existing APIs, commands and workflows.

The repository also clearly defines what it does not intend to do. proxxx does not aim to be a GUI, does not render SPICE or VNC, does not rewrite internal Proxmox algorithms in Rust when the source of truth is in Perl tools on the node, does not manage destructive Ceph writes, does not touch SDN configuration and does not try to solve browser-oriented authentication flows such as U2F/WebAuthn or OIDC. That list of limits is almost as relevant as the features, because it reduces unrealistic expectations and helps explain where the tool fits.

The quality of the development process is another notable element. The project declares a six-stage gate for every commit: formatting, Clippy in strict mode, dependency audit, tests, 88 read-only probes against a real cluster and a mutation lifecycle with LXC, QEMU and cluster operations. In production code, the Clippy configuration denies patterns such as unwrap, expect, panic or todo, a decision aligned with the idea of a tool that can execute sensitive operations.

proxxx is still a young project with a limited scope. It should not be read as a replacement for Proxmox Datacenter Manager, the official web interface or established automation tools such as Ansible or Terraform. Its value lies elsewhere: offering a terminal cockpit, with fast commands, TUI views, supply-chain auditing and explicit controls before acting on real machines.

For administrators who work daily with Proxmox VE and Proxmox Backup Server, the tool is appealing because it matches a very specific way of operating: fewer clicks, more terminal, more JSON and more caution before deleting, migrating or modifying a critical workload. The key will be to assess its maturity on real clusters, review the code, validate the releases and test it first in non-production environments.

Frequently asked questions

What is proxxx?

proxxx is an open source tool for managing Proxmox VE and Proxmox Backup Server from the terminal. It combines a CLI, TUI, MCP server, alerts and human approvals in a single binary written in Rust.

Does it replace the Proxmox web interface?

No. The project itself states that it does not aim to replace the Proxmox web UI. It is designed for operators who prefer terminal workflows, scripts, JSON output and repeatable processes.

Does it require installing an agent on the cluster?

No. proxxx works against the REST APIs of Proxmox VE and Proxmox Backup Server, and uses SSH for tasks that are not exposed through REST.

Why is the MCP integration relevant?

Because it allows agents or assistants based on language models to connect to a defined, auditable and limited tool surface, adding controls before executing changes on the cluster.