TCP (Transmission Control Protocol) and UDP (User Datagram Protocol) are the two foundational transports in the TCP/IP stack. Both ride over IP and use ports to multiplex applications, but their semantics, guarantees, and overhead differ dramatically. This technical guide unpacks how they work under the hood, what guarantees they provide, which problems they solve (and which they don’t), and how to measure and tune each one in production.

1) High-Level View

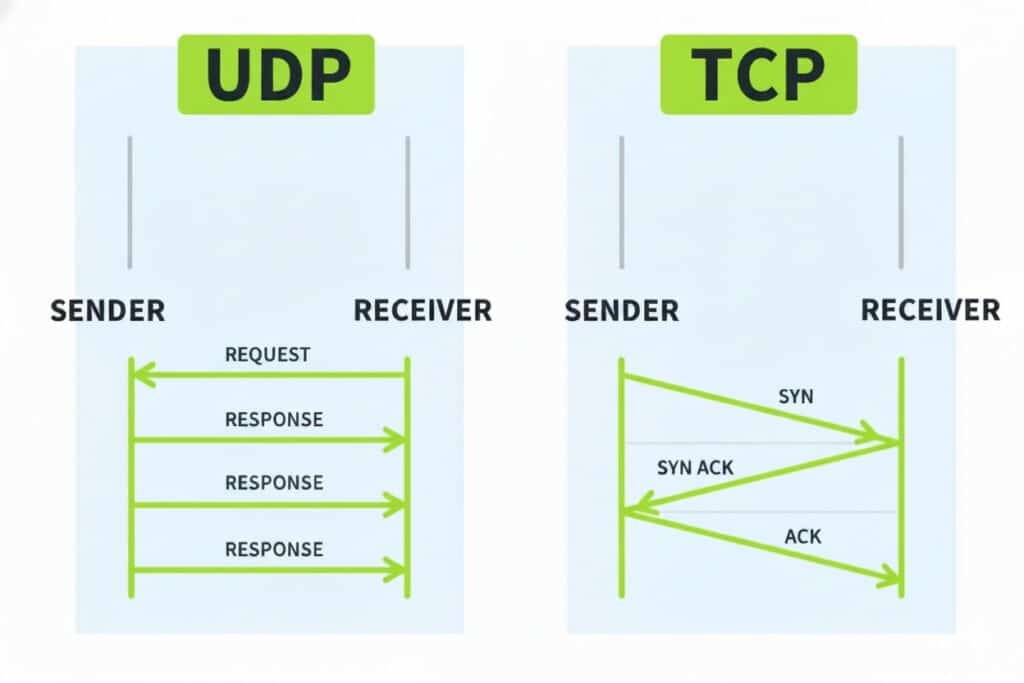

- UDP favors low latency and minimal overhead. It does not establish a connection, confirm deliveries, order packets, or retransmit losses. It shines when getting there fast matters more than getting there always (voice, interactive video, real-time gaming, DNS, lightweight telemetry) or when reliability logic lives in the application (custom FEC/ARQ, QUIC/HTTP/3).

- TCP favors reliability and control. It establishes a connection (three-way handshake), delivers a byte-stream in order and without duplicates, retransmits losses, regulates the sender (flow control), and protects the network (congestion control). It underpins HTTP/1.1, HTTP/2 (TLS), SMTP, IMAP, FTP and any workload that needs data integrity.

2) Headers & Fields (What Wireshark Shows)

UDP — Fixed 8 Bytes

```
0              15 16             31
+----------------+----------------+
|  Source Port   |    Dest Port   |
+----------------+----------------+
|     Length     |    Checksum    |
+----------------+----------------+
```

- Source/Destination Port (16b): app multiplexing.

- Length (16b): header + payload.

- Checksum (16b): mandatory in IPv6, optional in IPv4 (recommended). Includes IP pseudo-header to catch misdelivery/corruption.

No options; simplicity is its virtue (and its limit).
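To make the pseudo-header idea concrete, here is a minimal sketch of computing the UDP checksum over IPv4, assuming the RFC 1071 one's-complement sum. The function names are hypothetical helpers, and the addresses are documentation-range examples rather than anything from a real capture.

```python
import socket
import struct


def ones_complement_sum16(data: bytes) -> int:
    """RFC 1071 one's-complement sum of big-endian 16-bit words."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with one zero byte
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)  # end-around carry
    return total


def build_udp_ipv4(src_ip: str, dst_ip: str, sport: int, dport: int, payload: bytes) -> bytes:
    """Return UDP header + payload with the checksum computed over the IPv4 pseudo-header."""
    length = 8 + len(payload)  # Length field covers header + payload
    pseudo = (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
              + struct.pack("!BBH", 0, 17, length))  # zero, protocol 17 (UDP), UDP length
    header = struct.pack("!HHHH", sport, dport, length, 0)  # checksum zeroed while summing
    checksum = ~ones_complement_sum16(pseudo + header + payload) & 0xFFFF
    if checksum == 0:
        checksum = 0xFFFF  # on IPv4, 0 means "no checksum", so send all ones instead
    return struct.pack("!HHHH", sport, dport, length, checksum) + payload


datagram = build_udp_ipv4("192.0.2.1", "198.51.100.7", 40000, 53, b"hello")
```

A receiver repeats the same sum over pseudo-header + datagram (checksum included). Any result other than 0xFFFF signals corruption or misdelivery, which is why the pseudo-header catches packets that reached the wrong address.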

TCP — 20 Bytes Base + Options

```
0              15 16             31
+----------------+----------------+
|  Source Port   |    Dest Port   |
+----------------+----------------+
|         Sequence Number         |
+---------------------------------+
|      Acknowledgment Number      |
+----+----+------+----------------+
| HL |Rsvd|Flags |     Window     |
+----+----+------+----------------+
|    Checksum    | Urgent Pointer |
+----------------+----------------+
|   Options (MSS, WS, SACK, TS)   |
+---------------------------------+
```

- Sequence/Ack Numbers (32b): ordering & acknowledgments.

- Data Offset (HL, 4b): header length in 32-bit words (5–15, i.e., 20–60 bytes).

- Flags: SYN, ACK, FIN, RST, PSH, URG, plus CWR and ECE for ECN (the experimental NS bit is now historic).

- Window Size (16b): flow control (receiver buffer).

- Checksum: mandatory (with IP pseudo-header).

- Options (variable): MSS, Window Scale (WS), SACK Permitted and SACK blocks, Timestamps (TS), NOP, etc.
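The fields above map directly onto a fixed 20-byte layout. The following minimal sketch parses it with `struct`, assuming the RFC 9293 flag layout (CWR through FIN in the low byte). `parse_tcp_header` and `TcpHeader` are hypothetical names, and option bytes are returned raw rather than decoded.

```python
import struct
from typing import NamedTuple

# Bit i of the low byte of the offset/flags word -> flag name (RFC 9293 order)
FLAG_NAMES = ["FIN", "SYN", "RST", "PSH", "ACK", "URG", "ECE", "CWR"]


class TcpHeader(NamedTuple):
    src_port: int
    dst_port: int
    seq: int
    ack: int
    header_len: int  # bytes = data offset * 4
    flags: frozenset
    window: int
    checksum: int
    urgent: int
    options: bytes  # raw MSS/WS/SACK/TS/NOP bytes, not decoded here


def parse_tcp_header(segment: bytes) -> TcpHeader:
    if len(segment) < 20:
        raise ValueError("TCP header is at least 20 bytes")
    src, dst, seq, ack, off_flags, window, checksum, urgent = struct.unpack(
        "!HHIIHHHH", segment[:20])
    header_len = (off_flags >> 12) * 4  # data offset counts 32-bit words
    if header_len < 20 or header_len > len(segment):
        raise ValueError(f"bad data offset: {header_len} bytes")
    flags = frozenset(name for bit, name in enumerate(FLAG_NAMES) if off_flags & (1 << bit))
    return TcpHeader(src, dst, seq, ack, header_len, flags, window,
                     checksum, urgent, segment[20:header_len])
```

For example, a SYN carrying only an MSS option (kind 2, length 4) has a data offset of 6, so `header_len` is 24 and `options` holds those 4 bytes.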

3) Connection Establishment & Teardown (TCP)

Three-Way Handshake

- SYN → client announces ISN and options (MSS, WS, SACK, TS).

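- SYN-ACK ← server acknowledges the client's ISN (ack = ISN + 1), announces its own ISN, and returns its options.

- ACK → client acknowledges the server's ISN; the connection is established and the byte-stream begins.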

Teardown

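- FIN/ACK in each direction (each FIN half-closes one direction). The side that sends the first FIN (the active closer) enters TIME_WAIT for 2×MSL (fixed at 60 s on Linux). This lets it retransmit the final ACK if needed and keeps late duplicates from an old connection from being accepted by a new one on the same 4-tuple.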

- RST aborts immediately (error).

Key states: LISTEN, SYN_SENT, SYN_RECEIVED, ESTABLISHED, FIN_WAIT_1/2, CLOSE_WAIT, CLOSING, LAST_ACK, TIME_WAIT.
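The handshake and half-close described above can be observed with plain sockets on loopback. This is a minimal sketch; the function names are hypothetical, and the port is whatever the OS assigns.

```python
import socket
import threading


def upper_after_fin(listener: socket.socket) -> None:
    """Server side: read until the peer's FIN, then answer and close."""
    conn, _ = listener.accept()  # returns once the three-way handshake has completed
    with conn:
        chunks = []
        while data := conn.recv(4096):  # b"" signals the peer's FIN (half-close)
            chunks.append(data)
        # We are in CLOSE_WAIT: our send direction is still open after the peer's FIN.
        conn.sendall(b"".join(chunks).upper())
    # Leaving the with-block closes the socket and sends our FIN (LAST_ACK).


def half_close_demo(message: bytes) -> bytes:
    with socket.create_server(("127.0.0.1", 0)) as listener:  # port 0: OS picks a free port
        port = listener.getsockname()[1]
        server = threading.Thread(target=upper_after_fin, args=(listener,))
        server.start()
        # create_connection() returns after SYN, SYN-ACK, ACK.
        with socket.create_connection(("127.0.0.1", port)) as client:
            client.sendall(message)
            client.shutdown(socket.SHUT_WR)  # send FIN: done writing, still reading
            reply = b""
            while chunk := client.recv(4096):  # b"" once the server's FIN arrives
                reply += chunk
        server.join()
    # The client sent the first FIN, so it is the side that lingers in TIME_WAIT.
    return reply


print(half_close_demo(b"hello tcp"))  # b'HELLO TCP'
```

Running `ss -tan state time-wait` right afterwards should show the client's ephemeral port in TIME_WAIT, since the client was the active closer.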

4) Guarantees & Mechanisms (TCP)

- Reliability: checksum + retransmission. A lost segment is detected (timeouts or triple duplicate ACK) and resent.

- Ordering: strict in-order delivery to the app (no “gaps”). This causes Head-of-Line (HOL) blocking: a loss stalls subsequent data until recovered.

- Flow control: receiver advertises window to avoid buffer overflow.

- Congestion control: protects the network by adjusting cwnd (congestion window). Notable algorithms:

  - Reno/NewReno: AIMD, Slow Start, Fast Retransmit/Recovery.

  - CUBIC (Linux default): cubic growth, strong for high-BDP paths.

  - BBR: estimates bandwidth and RTT to avoid queues and fill the pipe without relying on loss.

- Timers: RTO (Retransmission Timeout) based on RTT & variance (Jacobson/Karels), Karn’s Algorithm (don’t sample RTT on retransmits), Delayed ACK (slightly delay ACKs to piggyback and reduce overhead).

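- Important options:

  - MSS: maximum segment size, i.e., the largest TCP payload excluding IP/TCP headers. Sized correctly, it avoids IP fragmentation.

  - Window Scale: extends the window beyond 65,535 bytes (shift of up to 14, so roughly 1 GiB); vital for high-BDP paths.

  - SACK: selective acknowledgments for fast recovery with multiple losses.

  - Timestamps: precise RTT measurement and PAWS (Protection Against Wrapped Sequence numbers), which rejects old duplicate segments once sequence numbers wrap on fast links.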
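The RTO timer above can be sketched following RFC 6298 (α = 1/8, β = 1/4, K = 4), with Karn's rule applied by ignoring samples from retransmitted segments. The class name and the configurable floor are assumptions: RFC 6298 recommends a 1 s minimum RTO, while Linux uses 200 ms.

```python
class RtoEstimator:
    """RFC 6298 retransmission-timeout estimator (all times in seconds)."""

    ALPHA = 1 / 8  # gain for the smoothed RTT
    BETA = 1 / 4   # gain for the RTT variance
    K = 4

    def __init__(self, clock_granularity: float = 0.001, min_rto: float = 1.0,
                 max_rto: float = 60.0):
        self.g = clock_granularity
        self.min_rto = min_rto  # RFC 6298 says 1 s; Linux uses 200 ms
        self.max_rto = max_rto
        self.srtt = None
        self.rttvar = None
        self.rto = 1.0  # initial RTO before any RTT sample

    def on_rtt_sample(self, r: float, retransmitted: bool = False) -> float:
        if retransmitted:
            return self.rto  # Karn's algorithm: ambiguous sample, do not use it
        if self.srtt is None:
            self.srtt = r
            self.rttvar = r / 2
        else:
            # RTTVAR is updated first, using the previous SRTT
            self.rttvar = (1 - self.BETA) * self.rttvar + self.BETA * abs(self.srtt - r)
            self.srtt = (1 - self.ALPHA) * self.srtt + self.ALPHA * r
        rto = self.srtt + max(self.g, self.K * self.rttvar)
        self.rto = min(self.max_rto, max(self.min_rto, rto))
        return self.rto

    def on_timeout(self) -> float:
        self.rto = min(self.max_rto, self.rto * 2)  # exponential backoff
        return self.rto
```

With `min_rto=0.2`, a first 100 ms sample yields an RTO of 0.3 s (SRTT 0.1 + 4 × 0.05). A second identical sample shrinks the variance term, bringing the RTO to 0.25 s, and a timeout then doubles it to 0.5 s.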

5) Performance & MTU/MSS

- MTU (e.g., 1,500 bytes on Ethernet) caps the IP packet size. Ideal MSS ≈ MTU − 40 (IPv4 + TCP, no options) or MTU − 60 (IPv6 + TCP), i.e., 1,460 or 1,440 bytes on Ethernet.

- Keep PMTUD (Path MTU Discovery) working to avoid IP fragmentation: don't block ICMP Fragmentation Needed (IPv4) or ICMPv6 Packet Too Big.

- For VPN/GRE/WireGuard, clamp MSS (e.g., 1,360–1,420) to account for tunnel overheads.

- Offloads: TSO/GSO/GRO reduce CPU by segmenting/coalescing in NIC/stack.
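The MSS arithmetic and the need for Window Scale both reduce to a few subtractions and shifts. This is a sketch under stated assumptions: base headers without options, and a hypothetical WireGuard-over-IPv6 overhead of 80 bytes (40 IPv6 + 8 UDP + 32 WireGuard). Function names are illustrative.

```python
IP_HEADER = {4: 20, 6: 40}  # base IP header bytes, no options/extension headers
TCP_HEADER = 20             # base TCP header bytes, no options


def mss_for_path(link_mtu: int, inner_ip: int = 4, tunnel_overhead: int = 0) -> int:
    """Largest TCP payload per packet that avoids IP fragmentation on this path."""
    return link_mtu - tunnel_overhead - IP_HEADER[inner_ip] - TCP_HEADER


def window_scale_for_bdp(bandwidth_bps: int, rtt_ms: int) -> int:
    """Smallest Window Scale shift whose maximum window covers the path's BDP."""
    bdp_bytes = bandwidth_bps * rtt_ms // 8000  # bits/s * ms -> bytes
    shift = 0
    while (65535 << shift) < bdp_bytes and shift < 14:  # RFC 7323 caps the shift at 14
        shift += 1
    return shift


# Hypothetical overhead: WireGuard over IPv6 = 40 (IPv6) + 8 (UDP) + 32 (WireGuard)
WG_OVER_IPV6 = 80
print(mss_for_path(1500))                                # 1460
print(mss_for_path(1500, inner_ip=6))                    # 1440
print(mss_for_path(1500, tunnel_overhead=WG_OVER_IPV6))  # 1380
print(window_scale_for_bdp(1_000_000_000, 50))           # 7 (BDP = 6.25 MB)
```

With TCP Timestamps enabled, each segment also carries 12 option bytes. On a 1,500-byte IPv4 path, the actual payload per segment is therefore typically 1,448 bytes rather than 1,460.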

6) UDP for Real: When and How

- Multicast: UDP supports unicast, multicast, and broadcast (TCP is unicast only). Multicast is critical for discovery (mDNS/SSDP), routing (RIP; OSPF also multicasts but runs directly over IP, not UDP), and streaming (RTP).

- Real-time media: voice/interactive video tolerates small loss but not added latency from retransmits; UDP underpins RTP, VoIP, WebRTC (with DTLS/SRTP up the stack).

- DNS: UDP by default (fast). If a response doesn't fit the UDP payload size (common with DNSSEC, even with EDNS0's larger buffers), the server sets the TC bit and the client retries over TCP.

- App-level reliability: if you need it, add FEC/ARQ, sequencing, and timers in-app (or use QUIC, see below).

Checksum: in IPv6 the UDP checksum is mandatory; in IPv4 it’s optional, but keep it enabled.
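As one example of in-app reliability, the simplest FEC scheme sends one XOR parity packet per group of k data packets. With that parity packet, the receiver can rebuild any single lost packet in the group without a retransmit round trip. This is a minimal sketch, assuming hypothetical equal-length packets (pad them beforehand) and a group index carried in an app-level header. Real systems often use stronger codes such as Reed-Solomon.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))  # assumes equal lengths


def make_parity(packets: list[bytes]) -> bytes:
    """XOR of all k data packets in the group."""
    return reduce(xor_bytes, packets)


def recover_missing(received: dict[int, bytes], parity: bytes, k: int) -> dict[int, bytes]:
    """Rebuild at most one lost packet from the survivors and the parity packet."""
    missing = [i for i in range(k) if i not in received]
    if len(missing) > 1:
        raise ValueError("single XOR parity repairs only one loss per group")
    if missing:
        received = dict(received)
        # parity ^ all survivors == the missing packet
        received[missing[0]] = reduce(xor_bytes, received.values(), parity)
    return received
```

The trade-off is 1/k extra bandwidth in exchange for zero added latency on single losses. Bursts that drop two packets from the same group still need ARQ or a stronger code.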

7) QUIC/HTTP-3: Reliability over UDP (and Why It Matters)

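- QUIC implements reliability, congestion control, and security (TLS 1.3 built in) over UDP, typically in user space. Streams are multiplexed independently, so a loss on one stream doesn't stall the others (no transport-level HOL blocking across streams). It also supports connection migration (IP/port changes without breaking the connection) and combines the transport and TLS handshakes into one round trip (0-RTT on resumption).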

- HTTP/3 runs on QUIC. Net effect: lower start-up latency and better loss/reorder behavior than TCP+TLS+HTTP/2.

8) Security, NAT & Middleboxes

- TLS vs. DTLS: TLS (TCP) for reliable streams; DTLS (UDP) for real-time with encryption.

- Attacks:

  - SYN flood (TCP): mitigate with SYN cookies, backlog tuning, BPF.

  - UDP amplification (DNS, NTP, Memcached): deploy BCP 38 (ingress filtering), rate limiting, DNS RRL.

- NAT:

  - TCP: NAT tracks state (SYN→ESTABLISHED→TIME_WAIT); mappings persist longer.

  - UDP: mappings expire quickly; use keepalives (STUN/ICE) for RTC.

- Firewalls: stateful tracking follows TCP’s sequence; for UDP it relies on timeouts and explicit rules.

- Multicast: cross L3 with IGMP/MLD (access) and PIM (core). NATs often block it by default.

9) Measurement & Observability

- tcpdump/Wireshark:

  - TCP handshake: the Wireshark display filter `tcp.flags.syn==1 && tcp.flags.ack==0` isolates SYNs; check the initial RTT and the MSS/WS/SACK/TS options.

  - UDP: ports, length, checksum; infer loss from app-level behavior.

- ss / netstat: states ESTABLISHED, TIME_WAIT, CLOSE_WAIT; tx/rx queues.

- iperf3:

  - TCP: measures throughput with real congestion control.

  - UDP: set target bitrate; observe jitter and loss (`-u -b 100M -l 1400 -R`).

- Key metrics:

  - TCP: RTT, RTO, cwnd, retransmissions, out-of-order, SACKed, zero-window, p99 latency.

  - UDP: loss %, jitter, reorder, arrival variance.
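For UDP, loss, reorder, and jitter have to be computed by the receiver itself. The following minimal sketch uses the RFC 3550 interarrival-jitter estimator (J += (|D| − J)/16, computed in arrival order). It assumes a hypothetical app-level header carrying a sequence number and send timestamp, and ignores sequence wraparound and duplicates for brevity.

```python
class UdpStreamStats:
    """Receiver-side loss %, reorder count, and RFC 3550-style jitter (seconds)."""

    def __init__(self) -> None:
        self.received = 0
        self.first_seq = None
        self.highest_seq = None
        self.reordered = 0
        self.jitter = 0.0
        self._prev_transit = None

    def on_packet(self, seq: int, sent_at: float, arrived_at: float) -> None:
        self.received += 1
        if self.highest_seq is None:
            self.first_seq = self.highest_seq = seq
        elif seq > self.highest_seq:
            self.highest_seq = seq
        else:
            self.reordered += 1  # arrived after a higher sequence number
        transit = arrived_at - sent_at  # constant clock offset cancels in the difference
        if self._prev_transit is not None:
            d = abs(transit - self._prev_transit)
            self.jitter += (d - self.jitter) / 16  # RFC 3550 section 6.4.1 smoothing
        self._prev_transit = transit

    def loss_percent(self) -> float:
        if self.highest_seq is None:
            return 0.0
        expected = self.highest_seq - self.first_seq + 1
        return 100.0 * max(0, expected - self.received) / expected
```

Because only differences in transit time matter, sender and receiver clocks need not be synchronized, only stable. That makes this estimator practical for in-app telemetry.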

10) Tuning (Carefully)

TCP

- Congestion: CUBIC (Linux default); consider BBR for high-BDP WANs (`net.ipv4.tcp_congestion_control=bbr`).

- Buffers: `tcp_rmem`/`tcp_wmem`; Linux auto-tuning is usually solid.

- Nagle/Delayed ACK: `TCP_NODELAY` (lower latency) vs. Nagle (aggregation); delayed ACKs can raise latency for chatty apps (evaluate).

- Keepalive: tune `tcp_keepalive_*` to NAT/ALB idle timeouts.

- TIME_WAIT: don’t nuke it blindly; widen the ephemeral port range, use `SO_REUSEADDR`/`SO_REUSEPORT` where appropriate, and scale horizontally.
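The sysctls above are system-wide, but several of the same knobs also exist per socket. This is a minimal sketch using Python's `socket` module. The helper names and timer values are illustrative assumptions, not recommendations. The keepalive timer and `TCP_CONGESTION` constants are platform-specific, so the code only uses them where Python exposes them.

```python
import socket
from typing import Optional


def tune_tcp_socket(sock: socket.socket, keepalive_idle: int = 60,
                    keepalive_interval: int = 10, keepalive_probes: int = 5) -> None:
    # Disable Nagle so small writes go out immediately (latency over aggregation).
    sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    # Enable keepalive probes so idle connections outlive NAT/load-balancer timeouts.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE, 1)
    # Per-socket keepalive timers (seconds / probe count) are platform-specific.
    if hasattr(socket, "TCP_KEEPIDLE"):
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_KEEPIDLE, keepalive_idle)
    if hasattr(socket, "TCP_KEEPINTVL"):
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_KEEPINTVL, keepalive_interval)
    if hasattr(socket, "TCP_KEEPCNT"):
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_KEEPCNT, keepalive_probes)


def congestion_control(sock: socket.socket) -> Optional[str]:
    """Read the socket's congestion algorithm name (Linux only), e.g. 'cubic'."""
    if not hasattr(socket, "TCP_CONGESTION"):
        return None
    raw = sock.getsockopt(socket.IPPROTO_TCP, socket.TCP_CONGESTION, 16)
    return raw.split(b"\x00", 1)[0].decode()
```

Choose keepalive idle times shorter than the tightest NAT or load-balancer idle timeout on the path. Otherwise the mapping may expire before the first probe is sent.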

UDP

- Buffers: raise `SO_RCVBUF`/`SO_SNDBUF` for high-rate flows (on Linux, requests are capped by `net.core.rmem_max`/`net.core.wmem_max`); enable GRO/GSO if the NIC/OS supports them.

- Rate control: do it in-app (token bucket), adapting to feedback (loss/jitter).

- NAT keepalive: lightweight periodic packets (STUN/ICE) for RTC.
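The in-app rate control mentioned above is often a token bucket. Tokens refill at the target rate, and a datagram is sent only if enough tokens are available. This is a minimal sketch with a hypothetical `set_rate` hook for loss/jitter feedback and an injectable clock for testing. How the new rate is chosen (e.g., AIMD on reported loss) is left to the application.

```python
import time


class TokenBucket:
    """Token-bucket pacer for outgoing datagrams."""

    def __init__(self, rate_bps: float, burst_bytes: float, clock=time.monotonic):
        self.rate = rate_bps / 8      # refill rate in bytes per second
        self.capacity = burst_bytes   # largest burst allowed at once
        self.tokens = burst_bytes     # start with a full bucket
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def try_consume(self, nbytes: int) -> bool:
        """Return True if a datagram of nbytes may be sent now."""
        self._refill()
        if self.tokens >= nbytes:
            self.tokens -= nbytes
            return True
        return False

    def set_rate(self, rate_bps: float) -> None:
        """Feedback hook: lower the rate on loss/jitter, raise it when the path is clean."""
        self._refill()  # account for time elapsed at the old rate first
        self.rate = rate_bps / 8
```

The burst size bounds how many back-to-back datagrams can hit the network at once. Keeping it near a few MTUs avoids overflowing shallow router queues.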

11) Practical Patterns

- Live interactive streaming → UDP/QUIC + FEC + rate control; prioritize latency/jitter over tiny loss.

- File delivery/backup → TCP with SACK, Window Scale, BBR on WAN; correct PMTUD.

- Authoritative/recursive DNS → UDP by default; TCP fallback (truncation, DNSSEC); consider DoT/DoH.

- Web APIs → HTTP/2 over TCP or HTTP/3 (QUIC) if you control clients and want faster start/mobility.

- Online gaming → UDP with state reconstruction and client prediction; avoid HOL.

12) Comparison Table (At a Glance)

| Dimension | TCP | UDP |
|---|---|---|
| Connection | Yes (handshake) | No |
| Reliability | Yes (retransmit, in-order) | No (app’s job if needed) |
| Ordering | Guaranteed (byte-stream) | No (reorder possible) |
| Congestion control | Yes (Reno/CUBIC/BBR…) | No (app/QUIC must do it) |
| Latency | Higher (HOL, handshake, control) | Lower (no handshake, no HOL) |
| Multicast | No | Yes |
| Security layer | TLS (over TCP) | DTLS (over UDP); QUIC integrates TLS 1.3 |
| Typical use | Web, mail, DBs, file transfer | RTP/VoIP, gaming, DNS, telemetry, QUIC/HTTP-3 |

Conclusions

- TCP is the default when you need integrity and in-order delivery, and added latency is acceptable. Real throughput hinges on RTT, BDP, options (MSS/WS/SACK/TS), and congestion algorithm.

- UDP is your tool when low latency or multicast is paramount, or when the app already implements reliability (QUIC, FEC/ARQ). Success depends on rate control, buffers, and tolerance to loss/jitter.

- HTTP/3/QUIC blurs the old boundary: reliability and security over UDP, fixing HOL at the stream level and speeding up handshakes.

Choosing well isn’t “TCP vs. UDP” but deciding which guarantees you need and where to implement them: network, transport, or application. Measure (RTT, loss, jitter, p99), tune prudently, and validate in production with iperf3, tcpdump/Wireshark, and metrics. That way the transport works for your service rather than the other way around.