For decades, Ethernet has been the quiet technology on which much of the Internet and data centers have been built. It has moved packets, connected servers, and supported enterprise applications, storage, virtualization, IP telephony, and cloud. Its basic philosophy was familiar to any network engineer: transport traffic efficiently, accept that a packet might occasionally be lost, and let protocols such as TCP handle recovery.

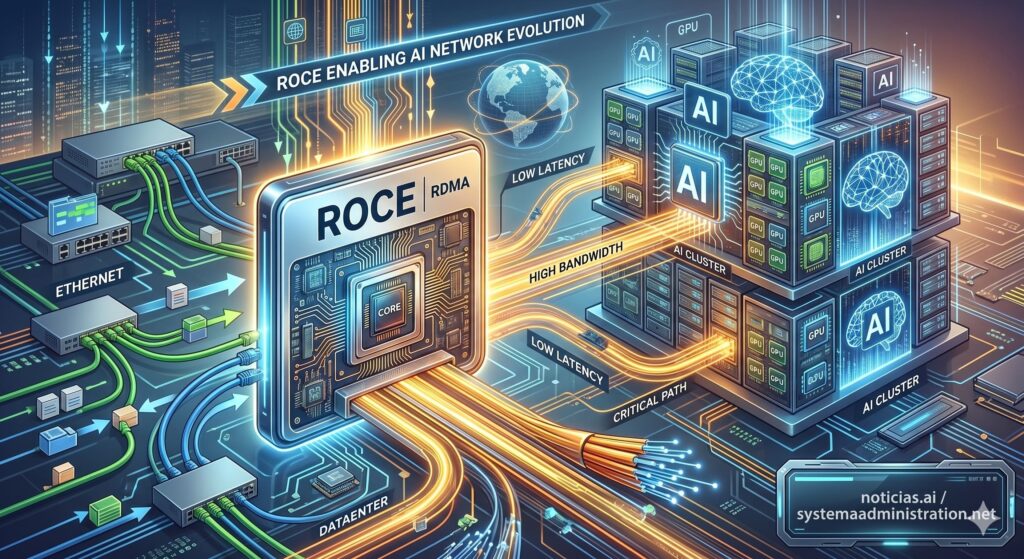

Large-scale artificial intelligence is changing that relationship. Clusters with thousands or tens of thousands of GPUs do not just need bandwidth. They need to move data between accelerators with very low latency, precise synchronization, and as little involvement as possible from the operating system and CPU. This is where RoCE (RDMA over Converged Ethernet) comes in: a technology that brings remote direct memory access (RDMA) to Ethernet, letting one machine read from and write to another machine's memory without routing every transfer through the remote CPU. Put simply, it is no longer just about sending packets, but about letting the nodes of a cluster exchange data almost as if remote memory were much closer than it really is.

This shift is forcing Ethernet to behave in a much more demanding way. Traditional networking was “best effort”. RoCE, by contrast, needs a practically lossless fabric, with controlled congestion, properly sized buffers, accurate telemetry, and end-to-end tuning. It is the same family of switches, cables, and commands that many teams already know, but the mental model is different.

From best-effort networking to a lossless fabric

The technical reason is straightforward. RDMA takes the CPU and kernel largely out of the data path, which improves latency, throughput, and efficiency, all of them critical in distributed training, large-scale inference, and HPC. Google Cloud illustrates this by contrasting the traditional network flow, in which the operating system processes the data before the NIC sends it, with RoCEv2, which extends RDMA capabilities over Ethernet for AI and scientific computing workloads. Its A3 Ultra and A4 machines use RoCEv2 for node-to-node communication and GPU-to-GPU connectivity, with up to 3.2 Tbps of inter-node GPU-to-GPU traffic on A3 Ultra.

The problem is that RDMA copes badly with a poorly tuned network. In conventional traffic, occasional packet loss is corrected through retransmissions. In large-scale RDMA traffic, loss, congestion, or reordering can affect thousands of coordinated operations between GPUs, and because a collective operation finishes only when its slowest participant does, a single stalled transfer can hold up the whole group. That is why people talk about lossless or near-lossless Ethernet: not because physics has disappeared, but because the fabric is designed to avoid drops in critical traffic classes.
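
To make the scale argument concrete, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that every packet is lost independently with the same probability `p`; real losses are bursty and correlated, so treat the result as intuition rather than a model of any real fabric. The helper `prob_any_loss` is hypothetical.

```python
import math


def prob_any_loss(p: float, n: int) -> float:
    """Probability that at least one of n packets is lost.

    Assumes independent per-packet loss with probability p (an illustrative
    simplification). Computes 1 - (1 - p)**n using log1p/expm1 so the result
    stays accurate when p is tiny.
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    return -math.expm1(n * math.log1p(-p))


if __name__ == "__main__":
    # Same per-packet loss rate, very different outcomes as the step grows.
    for n in (1_000, 100_000, 1_000_000):
        print(f"p=1e-5, n={n:>9,}: P(at least one loss) = {prob_any_loss(1e-5, n):.4f}")
```

A loss rate that looks negligible per packet makes a loss somewhere in a large synchronized step close to certain, and in a collective that one loss delays every participant.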

That is where two technologies many network engineers already know become much more important. PFC (Priority Flow Control) selectively pauses a traffic class when a queue fills up: the switch tells its upstream neighbor to stop sending frames of that priority, so they are held back rather than dropped. ECN (Explicit Congestion Notification) marks packets as queues build, before drops occur, and that signal is relayed back to the sender so it slows down. Cisco summarizes the relationship well: PFC and ECN complement each other for congestion management and must be enabled end to end, on both hosts and network nodes, to support lossless traffic.
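
The interplay between the two mechanisms can be sketched as a single switch queue. This is a toy model, not any vendor's implementation: it assumes a RED/WRED-style linear ECN marking ramp between two depth thresholds (`ecn_kmin`, `ecn_kmax`) and a PFC pause with hysteresis (`pfc_xoff` to pause, `pfc_xon` to resume). All names and byte values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LosslessQueueConfig:
    """Illustrative thresholds (bytes) for one lossless queue; not vendor defaults."""
    ecn_kmin: int    # at or below this depth, never mark
    ecn_kmax: int    # at or above this depth, mark every packet
    ecn_pmax: float  # marking probability reached just below kmax
    pfc_xoff: int    # depth at which a PAUSE is sent upstream for this priority
    pfc_xon: int     # depth at which the pause is released

    def __post_init__(self):
        # This sketch enforces ECN acting before PFC, so senders get a chance
        # to slow down before the switch has to pause the upstream link.
        if not (self.ecn_kmin < self.ecn_kmax <= self.pfc_xoff):
            raise ValueError("expected ecn_kmin < ecn_kmax <= pfc_xoff")
        if not (self.pfc_xon < self.pfc_xoff):
            raise ValueError("expected pfc_xon < pfc_xoff (hysteresis)")


def ecn_mark_probability(depth: int, cfg: LosslessQueueConfig) -> float:
    """Linear ramp: 0 up to kmin, rising toward pmax until kmax, then 1."""
    if depth <= cfg.ecn_kmin:
        return 0.0
    if depth >= cfg.ecn_kmax:
        return 1.0
    return cfg.ecn_pmax * (depth - cfg.ecn_kmin) / (cfg.ecn_kmax - cfg.ecn_kmin)


def next_pfc_state(depth: int, paused: bool, cfg: LosslessQueueConfig) -> bool:
    """Return whether the upstream sender should be paused after observing depth."""
    if not paused and depth >= cfg.pfc_xoff:
        return True   # send PAUSE (XOFF) for this priority
    if paused and depth <= cfg.pfc_xon:
        return False  # send resume (a pause frame with zero quanta)
    return paused     # between thresholds: keep the current state


if __name__ == "__main__":
    cfg = LosslessQueueConfig(ecn_kmin=100_000, ecn_kmax=400_000, ecn_pmax=0.2,
                              pfc_xoff=600_000, pfc_xon=300_000)
    paused = False
    for depth in (50_000, 250_000, 500_000, 650_000, 400_000, 250_000):
        paused = next_pfc_state(depth, paused, cfg)
        print(f"depth={depth:>7} mark_p={ecn_mark_probability(depth, cfg):.2f} paused={paused}")
```

Placing the ECN ramp below the PFC pause threshold reflects the common practice of letting senders back off on ECN marks first and reserving PFC as the last line of defense against drops.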

The nuance matters. PFC is not magic. If configured badly, it can cause head-of-line blocking, spread congestion to upstream switches, or create problems that are hard to diagnose. ECN requires appropriate thresholds, endpoint participation, and a careful reading of traffic patterns. In an AI cluster, a small tuning mistake can result in persistent queues and the latency they add, degraded training performance, or intermittent failures that look random.
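
Head-of-line blocking follows from the fact that PFC acts on a whole priority on a link, not on individual flows. The toy model below, with hypothetical flow and port names and an arbitrarily chosen priority 3, shows how a pause triggered by one congested egress port also stalls a flow in the same class whose own destination is idle.

```python
from typing import List, NamedTuple, Tuple


class Flow(NamedTuple):
    name: str
    priority: int  # 802.1p priority the flow is mapped to
    egress: str    # egress port the flow is heading for


def pfc_pause_impact(flows: List[Flow], paused_priority: int,
                     congested_egress: str) -> Tuple[List[str], List[str]]:
    """Toy model of one PFC pause on a shared upstream link.

    Every flow in the paused priority stops, including flows whose own egress
    is not congested. Returns (stalled flow names, head-of-line victim names).
    """
    stalled = [f.name for f in flows if f.priority == paused_priority]
    victims = [f.name for f in flows
               if f.priority == paused_priority and f.egress != congested_egress]
    return stalled, victims


if __name__ == "__main__":
    flows = [
        Flow("allreduce-a", priority=3, egress="port1"),  # causes the congestion
        Flow("allreduce-b", priority=3, egress="port2"),  # same class, idle egress
        Flow("storage", priority=0, egress="port2"),      # other class, not paused
    ]
    stalled, victims = pfc_pause_impact(flows, paused_priority=3, congested_egress="port1")
    print("stalled:", stalled)
    print("head-of-line victims:", victims)
```

`allreduce-b` is the victim: its egress is idle, yet it stops because it shares a priority and an upstream link with the flow that caused the congestion. Repeated hop by hop, the same effect is how pauses spread congestion through a fabric.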

Meta, Google, and NVIDIA show that Ethernet is already in production

RoCE’s validation does not come only from vendors. Meta published a technical paper at SIGCOMM on RDMA over Ethernet for large-scale distributed training. In it, the company explains that its RoCE clusters support thousands of GPUs and production workloads for ranking, recommendation, content understanding, natural language processing, and generative AI. Meta states that, with careful topology, routing, transport, and operations design, RoCE can support AI training at scale.

Meta also detailed two clusters of 24,576 NVIDIA H100 GPUs each for Llama 3 training. One uses a RoCE fabric based on Arista 7800 with OCP Wedge400 and Minipack2 switches; the other uses NVIDIA Quantum-2 InfiniBand. Both interconnect endpoints at 400 Gbps. That comparison matters because it shows that RoCE is no longer an experimental option for small labs but an architecture that major platforms run in production for cutting-edge AI workloads.

NVIDIA has also responded to this trend with Spectrum-X, its Ethernet platform for AI. The company presents Spectrum-X as a high-performance Ethernet network for GPU-to-GPU communication, with SuperNICs providing RoCE connectivity between GPU servers, congestion control, telemetry, and performance isolation. Even NVIDIA, which became a leading InfiniBand player through its acquisition of Mellanox, is pushing Ethernet as a central part of its AI fabrics.

Broadcom, meanwhile, is strengthening its AI Ethernet portfolio with switches, optics, retimers, DSPs, and other components designed for gigawatt-scale AI clusters. In its fiscal first-quarter 2026 results, the company reported $8.4 billion in AI revenue, up 106% year over year, driven by custom accelerators and AI networking. In March, at OFC 2026, it also presented a portfolio aimed at clusters of that scale and at the transition toward the 200T era.

Some industry analyses suggest that Ethernet/RoCE is gaining ground against InfiniBand in new AI deployments. A figure of 70% circulates in market publications, but it should be treated with caution unless it can be traced to an audited, official breakdown. What does seem clear is the direction of travel: hyperscalers want performance, but also openness, supplier diversity, controlled costs, and less dependence on a closed stack.

The new role of the network engineer

The deeper change is cultural. The engineer who ten years ago designed VLANs, QoS for voice, LACP aggregations, and leaf-spine networks for virtualization now faces fabrics where every microsecond counts. The configuration may still look familiar, but the goal has changed. It is no longer just about the network being “up” and having enough capacity. It is about ensuring that a collective training operation does not degrade because an incast burst fills a buffer, because ECN marks too late, or because PFC pauses too much traffic.
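
How quickly an incast burst fills a buffer is simple arithmetic. If `N` senders transmit at line rate toward one egress port of the same speed, the queue grows at `N - 1` times the link rate. The sketch below uses hypothetical numbers (16 senders, 400 Gbps links, 16 MB of buffer available to the queue) and ignores ECN and PFC reactions and shared-buffer allocation, so it only gives an order of magnitude.

```python
def incast_fill_time_us(buffer_bytes: float, senders: int, link_gbps: float) -> float:
    """Time in microseconds for an incast burst to fill a queue.

    Assumes `senders` hosts transmit at full line rate toward one egress port
    of the same speed, so the queue grows at (senders - 1) * link rate.
    Ignores ECN/PFC reaction, shared-buffer dynamics, and partial rates.
    """
    if senders < 2:
        raise ValueError("incast needs at least two senders")
    growth_bytes_per_s = (senders - 1) * link_gbps * 1e9 / 8
    return buffer_bytes / growth_bytes_per_s * 1e6


if __name__ == "__main__":
    # Hypothetical: 16 senders at 400 Gbps into one 400 Gbps port, 16 MB of buffer.
    print(f"{incast_fill_time_us(16e6, 16, 400):.1f} us")
```

Under these assumptions the queue fills in about 21 microseconds, which is why ECN and PFC thresholds have to be chosen with the workload's burst patterns in mind.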

In these environments, traditional metrics are not enough. Teams need to observe queues, ECN marks, PFC events, drops by priority, rail utilization, hash entropy, microbursts, queuing latency, and NCCL behavior. They also need to coordinate host, NIC, switch, driver, firmware, operating system, scheduler, and physical topology. RoCE is a networking technology, but its real-world performance depends on the entire stack.
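
Most of these signals start as cumulative hardware counters that only become meaningful as rates over an interval. The sketch below assumes a hypothetical per-queue snapshot (the field names are placeholders, not any vendor's schema) and derives an ECN marking ratio, a PFC pause rate, and an alert for drops on a class that is supposed to be lossless.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueueSnapshot:
    """Cumulative counters for one priority/queue at a point in time.
    Field names are hypothetical; map them to what your switch or NIC exports."""
    tx_packets: int
    ecn_marked: int
    pfc_pause_rx: int  # PFC pause frames received for this priority
    drops: int


def interval_report(prev: QueueSnapshot, curr: QueueSnapshot,
                    interval_s: float, lossless: bool = True) -> dict:
    """Turn two cumulative snapshots into per-interval rates and simple alerts.
    Assumes counters are monotonic and did not wrap or reset in between."""
    tx = curr.tx_packets - prev.tx_packets
    marked = curr.ecn_marked - prev.ecn_marked
    pauses = curr.pfc_pause_rx - prev.pfc_pause_rx
    drops = curr.drops - prev.drops
    report = {
        "ecn_mark_ratio": marked / tx if tx else 0.0,
        "pfc_pauses_per_s": pauses / interval_s,
        "drops": drops,
        "alerts": [],
    }
    if lossless and drops > 0:
        report["alerts"].append("drops on a lossless class")
    return report


if __name__ == "__main__":
    t0 = QueueSnapshot(tx_packets=1_000_000, ecn_marked=2_000, pfc_pause_rx=10, drops=0)
    t1 = QueueSnapshot(tx_packets=1_500_000, ecn_marked=7_000, pfc_pause_rx=60, drops=3)
    print(interval_report(t0, t1, interval_s=10.0))
```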

Ultra Ethernet adds another piece to the puzzle. The UE 1.0 specification aims to define a high-performance Ethernet for AI and HPC that remains compatible with the existing Ethernet ecosystem. Its authors note that RoCEv2 made it possible to bring InfiniBand-like semantics to Ethernet, but that it also carries limitations: dependence on PFC, strictly in-order delivery, blocking risks, and less flexible load balancing. Ultra Ethernet attempts to modernize that transport with new ideas around multipath, out-of-order delivery, and more scalable mechanisms for extreme-scale systems.
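
The load-balancing limitation shows up in a toy comparison. Classic per-flow ECMP hashes each flow's 5-tuple once, so all of its packets follow one path and arrive in order; spraying individual packets across paths balances load but delivers them out of order. The sketch below uses CRC32 as a stand-in for a switch hash function (an assumption) and eight hypothetical RoCEv2 flows, which use UDP destination port 4791. It illustrates the trade-off only and does not model the Ultra Ethernet transport itself.

```python
import zlib
from typing import List, Tuple

FiveTuple = Tuple[str, str, int, int, int]  # src_ip, dst_ip, proto, src_port, dst_port


def per_flow_ecmp_loads(flows: List[FiveTuple], n_links: int, pkts_per_flow: int) -> List[int]:
    """Per-flow ECMP: hash each 5-tuple once; every packet of the flow uses that link."""
    loads = [0] * n_links
    for ft in flows:
        link = zlib.crc32(repr(ft).encode()) % n_links  # CRC32 stands in for the switch hash
        loads[link] += pkts_per_flow
    return loads


def per_packet_spray_loads(flows: List[FiveTuple], n_links: int, pkts_per_flow: int) -> List[int]:
    """Packet spraying: round-robin every packet across links, ignoring flow identity.
    Only viable if the transport tolerates out-of-order arrival."""
    loads = [0] * n_links
    for seq in range(len(flows) * pkts_per_flow):
        loads[seq % n_links] += 1
    return loads


if __name__ == "__main__":
    # Eight hypothetical RoCEv2 flows over eight uplinks.
    flows = [(f"10.0.0.{i}", f"10.0.1.{i}", 17, 49152 + i, 4791) for i in range(8)]
    ecmp = per_flow_ecmp_loads(flows, 8, 1_000)
    spray = per_packet_spray_loads(flows, 8, 1_000)
    print("per-flow ECMP:", ecmp, "max =", max(ecmp))
    print("packet spray :", spray, "max =", max(spray))
```

With uniformly random hashing, the chance that eight flows land on eight distinct links is 8!/8^8, well under 1%, so some uplinks almost always carry two or more large flows while others sit idle. Spraying evens the load, but the resulting reordering conflicts with RoCEv2's strictly in-order delivery.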

This does not mean RoCE is going away. On the contrary, it is the bridge technology bringing Ethernet into the heart of AI clusters. But it does show that the industry understands its limits and is working on an evolution better suited to hundreds of thousands, or even millions, of accelerators.

InfiniBand is not dead, but Ethernet changes the economics

InfiniBand remains an excellent technology for HPC and AI. It has a long tradition in high-performance clusters, low latency, mature RDMA semantics, and a tightly integrated ecosystem. For many deployments, especially when extreme performance is required with a closed and validated stack, it will continue to make sense.

Ethernet’s advantage is different: industrial scale, installed base, supplier diversity, familiar operational tools, and a vast market. Broadcom expressed this directly when presenting Tomahawk 6: its chips use Ethernet, a decades-old standard, and the company argues that large AI networks can be built on this technology without resorting to more “exotic” solutions. Reuters reported that Tomahawk 6 is designed for AI data centers with more than 100,000 GPUs and that Broadcom expects future scenarios with up to one million GPUs in a single physical building.

The real decision will not be InfiniBand or Ethernet in the abstract. It will depend on scale, cost, vendor, software, availability, team experience, latency targets, operating model, and tolerance for lock-in. But RoCE has changed the conversation: Ethernet is no longer just the general-purpose data center network; it is a serious candidate for the critical network used in training and inference.

For infrastructure teams, the conclusion is direct. Deep knowledge of lossless Ethernet, PFC, ECN, buffers, congestion, telemetry, and RDMA fabric operations will become an increasingly valuable skill. It is not learned by reading a data sheet or copying a reference configuration. It is learned in the lab, by breaking things, measuring queues, adjusting thresholds, and understanding how real workloads behave.

AI has brought the network back to the center of infrastructure design. For years, much of the attention focused on CPUs, GPUs, storage, and virtualization. Now the performance of a cluster depends as much on how its accelerators communicate as on how much compute power they have. Ethernet has been the highway of modern computing. With RoCE, we are asking it to behave like a racetrack.

Frequently asked questions

What is RoCE?

RoCE stands for RDMA over Converged Ethernet. It brings remote direct memory access (RDMA) to Ethernet, reducing the involvement of the CPU and operating system in transfers between servers.

Why does RoCE need a lossless network?

Because RDMA workloads, especially distributed AI training, are highly sensitive to packet loss, congestion, and latency. The network must avoid drops in critical traffic by using mechanisms such as PFC and ECN.

What role do PFC and ECN play?

PFC pauses specific traffic classes when there is congestion in a queue. ECN marks packets before drops occur so that the sender slows down. Together, they help build Ethernet fabrics suitable for RDMA.

Will Ethernet completely replace InfiniBand?

Not necessarily. InfiniBand will remain relevant in many high-performance environments. But Ethernet with RoCE, together with emerging technologies such as Ultra Ethernet, is gaining ground because of its openness, scale, and supplier diversity.